FlavorFinder

Client: N/A

Year: 2025

Tag: UI/UX

Duration: Ongoing

The project itself :

FlavorFinder is a web-based restaurant discovery platform that simplifies the process of finding places to eat through conversational search. Instead of scrolling through long lists of restaurants, users can describe what they are craving and receive personalized recommendations powered by AI.

The platform also supports smaller restaurants by partnering with them to provide richer menu data and increased visibility.

Restaurant discovery platforms often overwhelm users with too many options and rigid filtering systems, making it difficult to quickly decide where to eat.

Design a conversational discovery platform that simplifies restaurant search while helping smaller restaurants gain visibility through richer menu data and featured placement.

UX/UI Designer responsible for product concept, user research synthesis, interaction design, and interface design.

Defined the product concept and discovery workflow

Designed conversational search interactions

Developed wireframes and high-fidelity interface designs

Created the information architecture and user flows

Iterated on designs

Built a high-fidelity prototype

All about the user :

Research focused on understanding how users currently search for restaurants and where friction occurs in the discovery process. Users frequently experience decision fatigue when browsing long lists of options and struggle to filter results based on nuanced preferences.

These insights highlighted an opportunity to simplify discovery through conversational interactions.

Users experience decision fatigue when browsing large restaurant directories

Traditional filters do not capture nuanced cravings or dietary preferences

Smaller restaurants struggle to gain visibility on large platforms

Replace complex filtering with conversational search

Highlight small or local restaurants through featured partnerships

Use menu-level data to generate better recommendations

The platform primarily serves two user groups: diners looking for faster restaurant discovery and small restaurant owners seeking increased visibility. Users often search for restaurants in group settings where multiple preferences must be considered.

It is the series of experiences Carlos has as he achieves a specific goal. It was built on his experience.

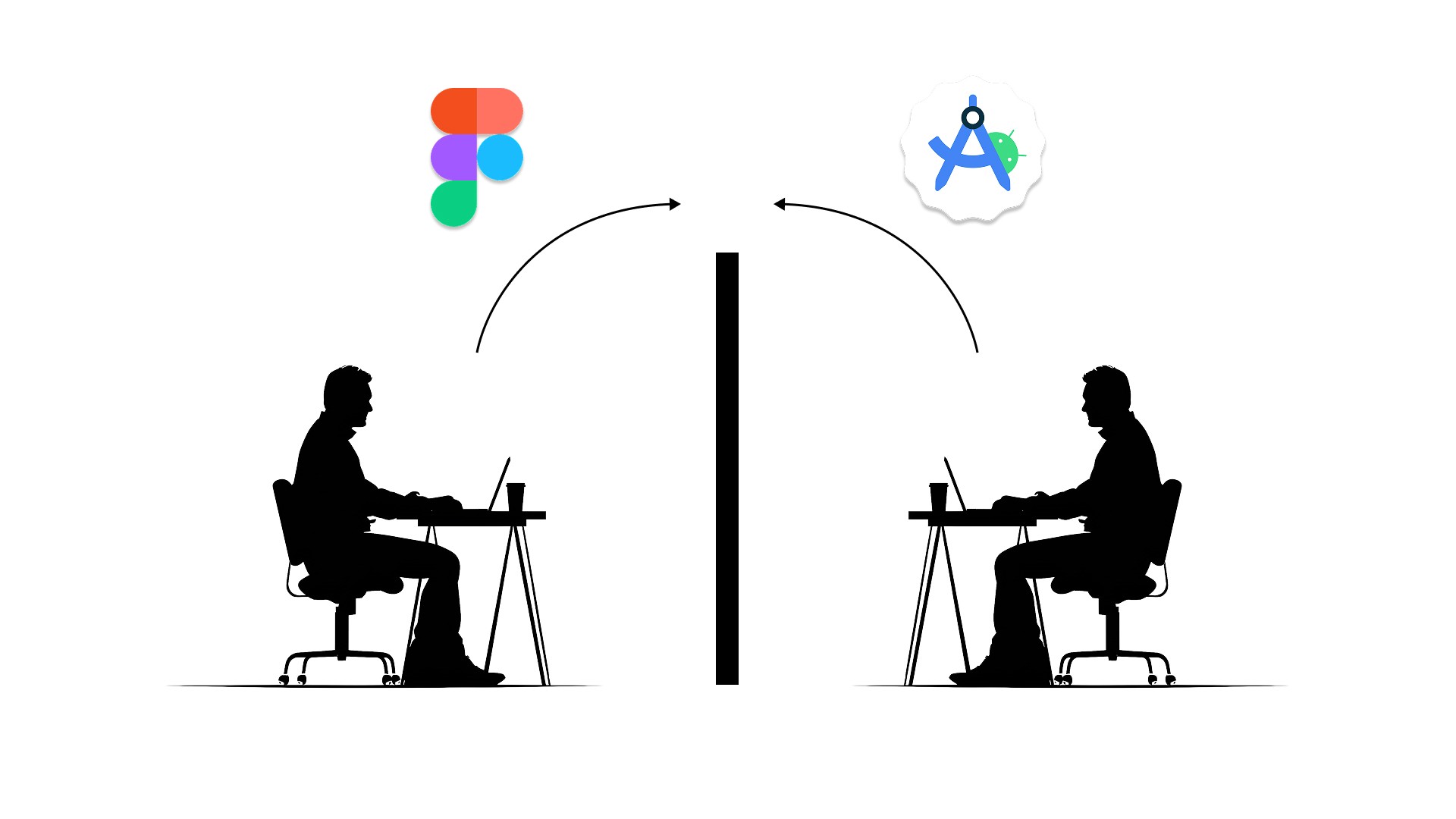

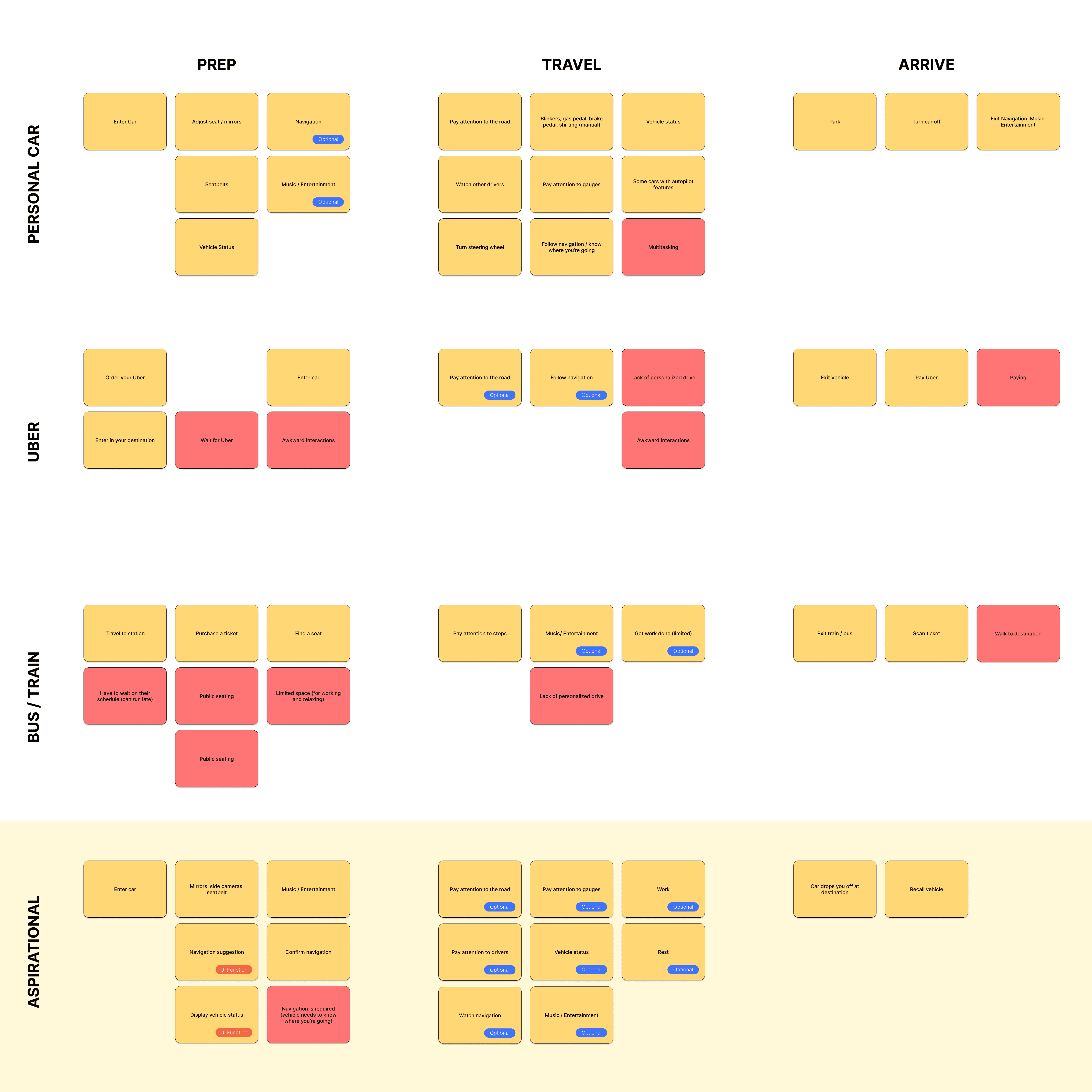

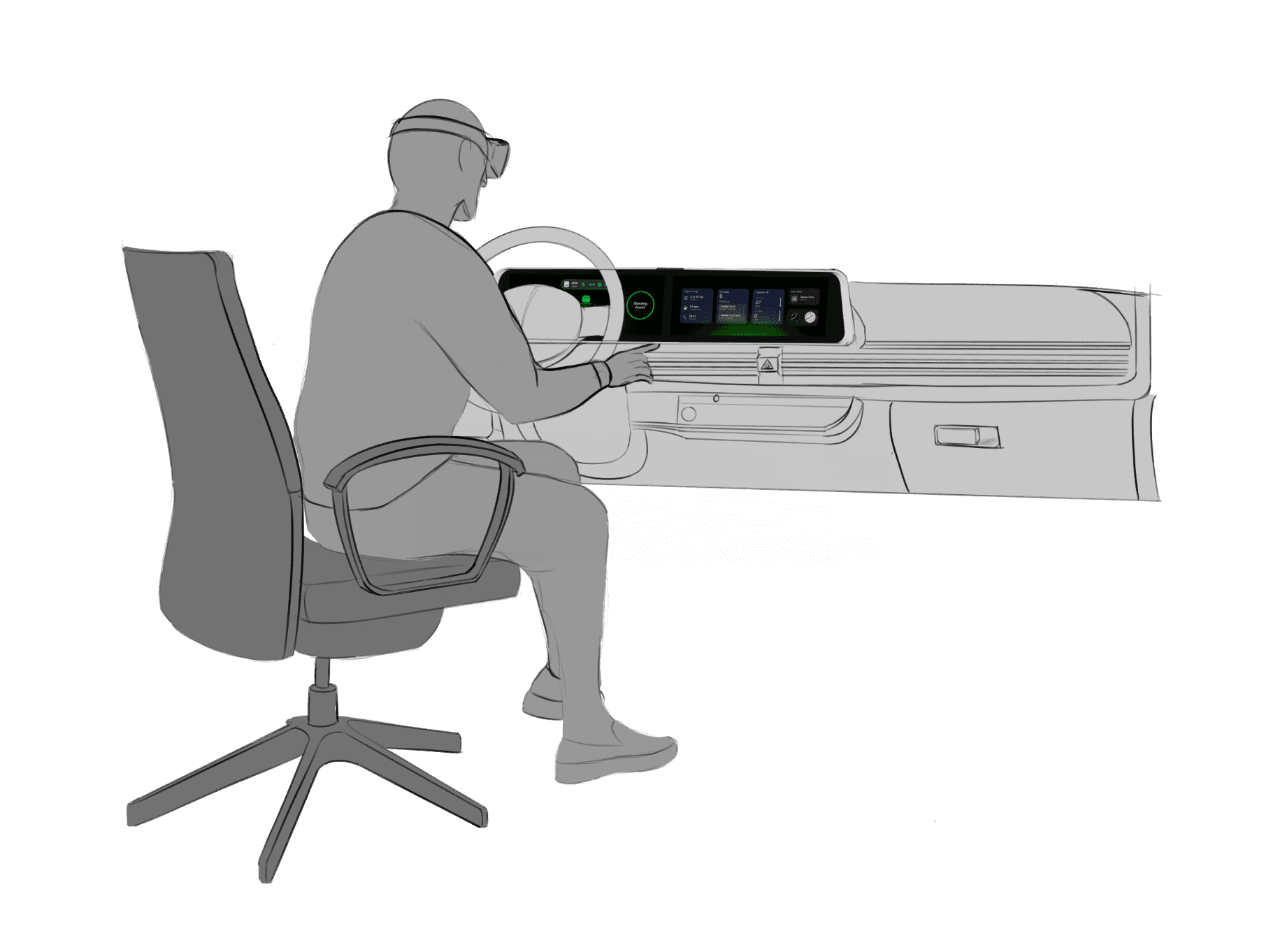

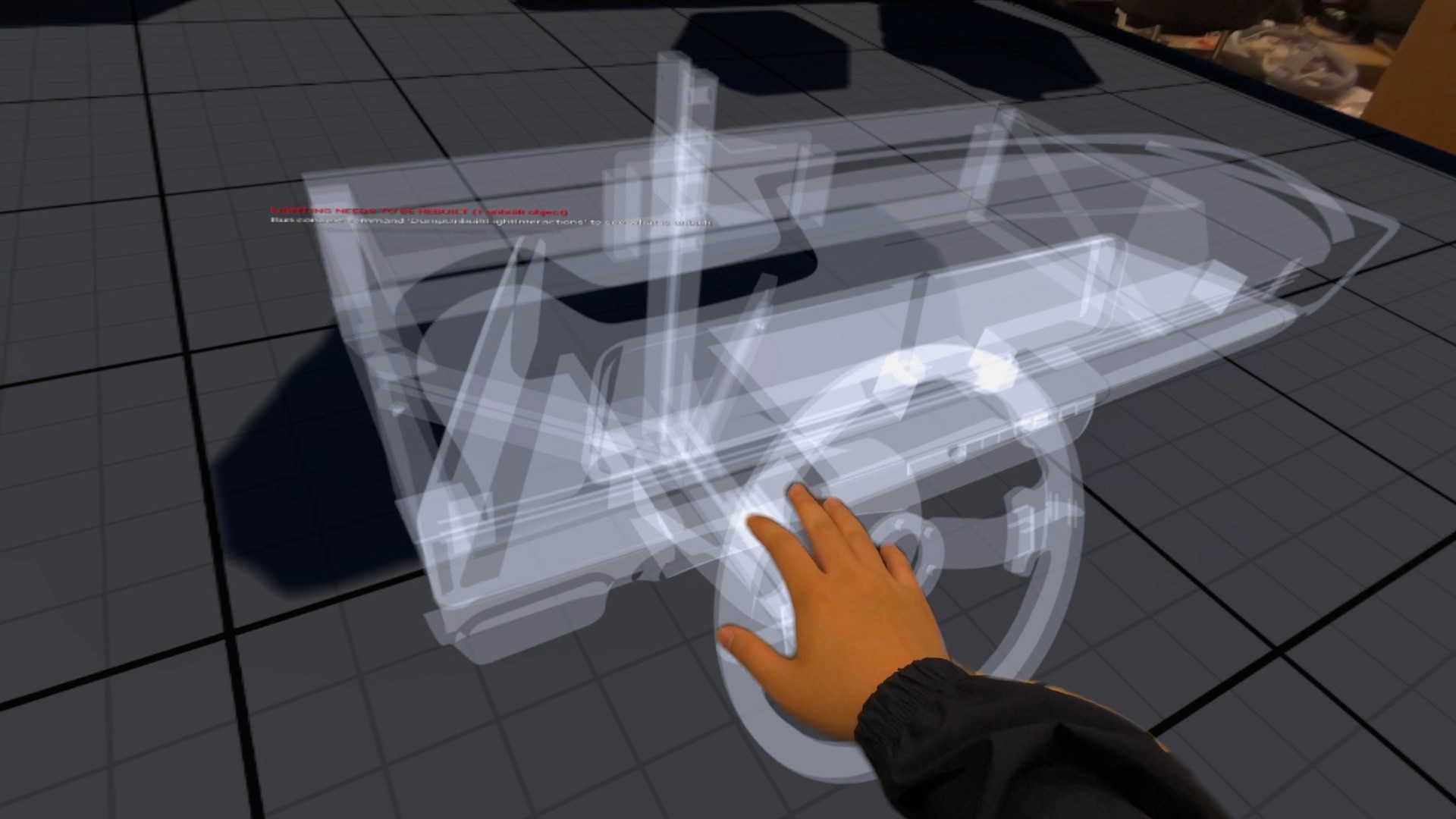

Existing infotainment development workflows are spread across disconnected tools and disciplines, creating friction during iteration and review. Interface concepts are often evaluated without accurate physical context, making it difficult to assess spatial relationships, reach, and visibility early in the process. As a result, feedback cycles slow down and misalignment between teams can persist until later stages of development.

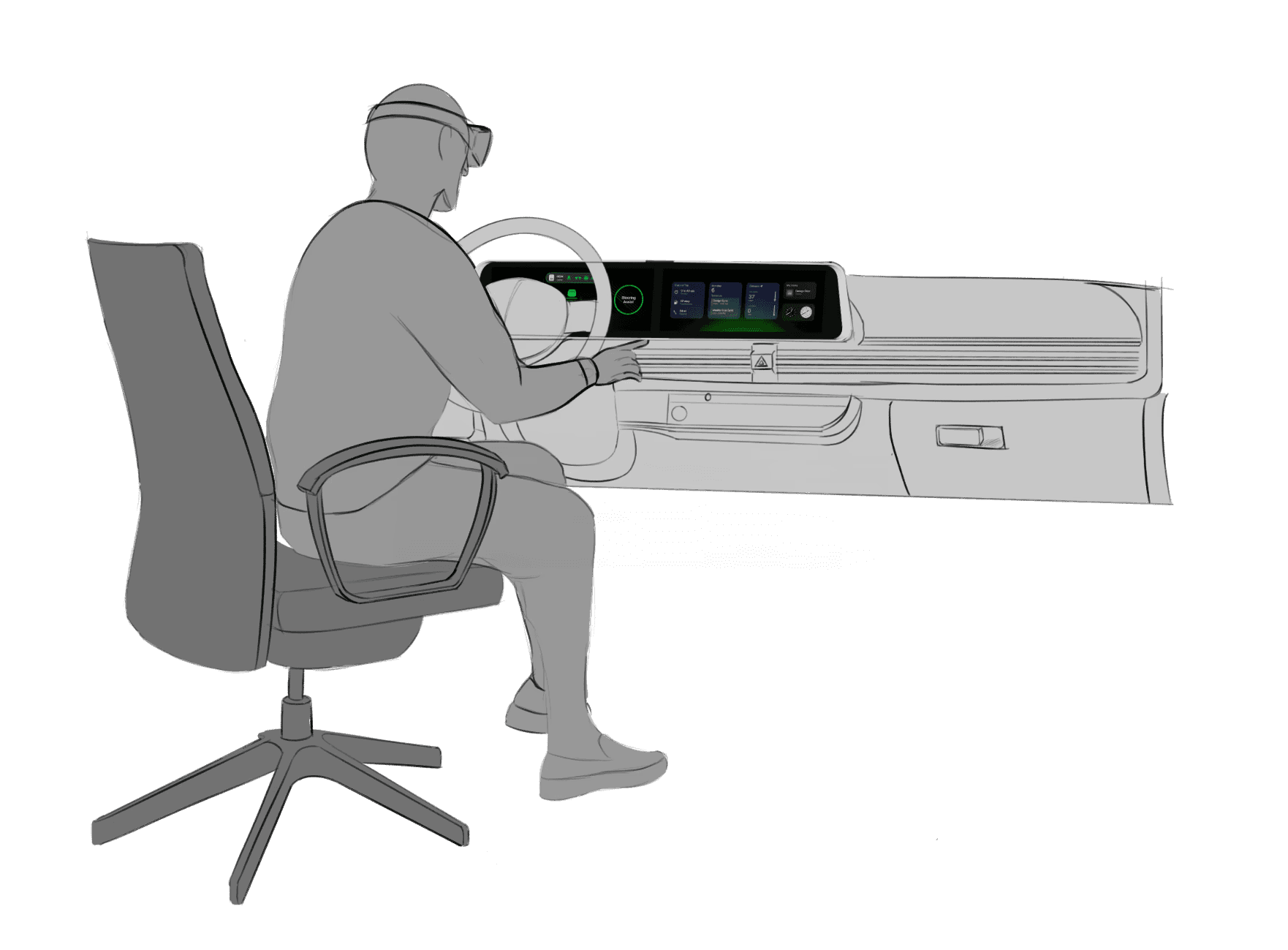

A spatially accurate reference environment to evaluate layout, scale, and visual hierarchy in context

Clear alignment between digital interface behavior and physical hardware constraints

I developed a user journey map of Carlos's experience with the app to highlight potential pain points and identify areas for improvement.

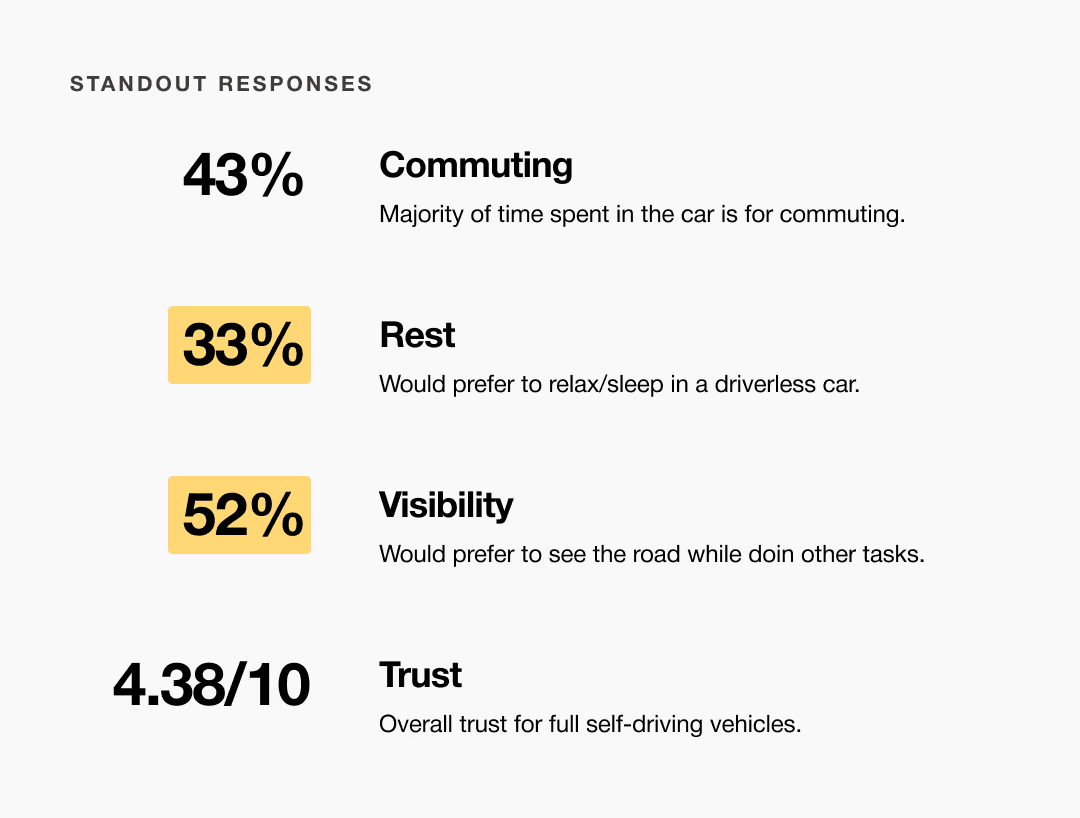

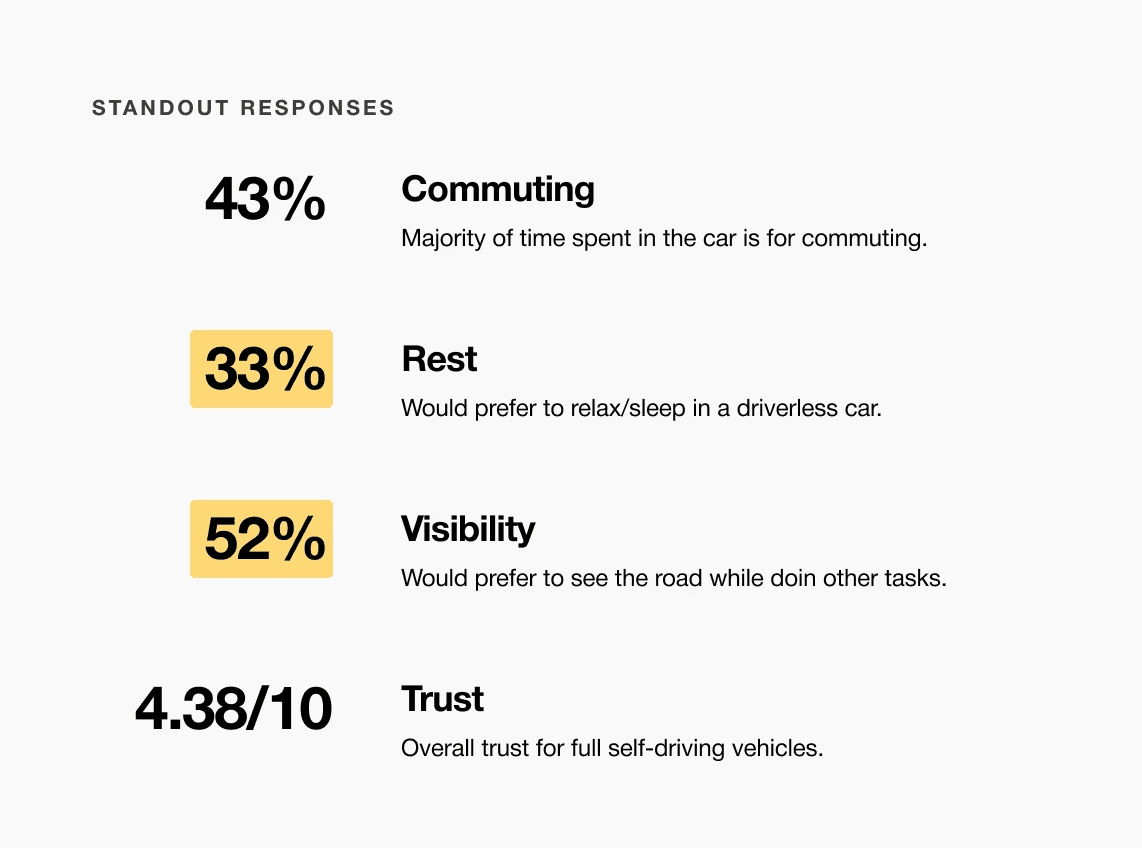

After figuring out our user group, we created a survey for Gen-Z commuters to help draw out insights that might lead to design opportunities. The survey included two types of questions:

Current driving questions

Future thinking, Level 5 questions

I developed a user journey map of a typical user's experience with searching for food to pinpoint potential pain points and identify areas for improvement.

The ideal journey begins with a user describing what they want to eat through conversational search. The system interprets their request and returns tailored recommendations that help users quickly decide where to eat.

A slight majority of survey respondents would like to rest in a driverless car, but getting additional work done was a close second.

Visibility of the road is important to survey respondents, so the HUD should not clutter their view.

Commute time often feels underutilized or disconnected from passengers’ goals

FlavorFinder transforms restaurant discovery from a frustrating browsing experience into a guided conversation. By combining AI search with detailed restaurant data, the platform helps users find food faster while increasing visibility for local businesses.

Choose a good movie in a cinema theatre nearby and select seats in an app in a fast and clear way

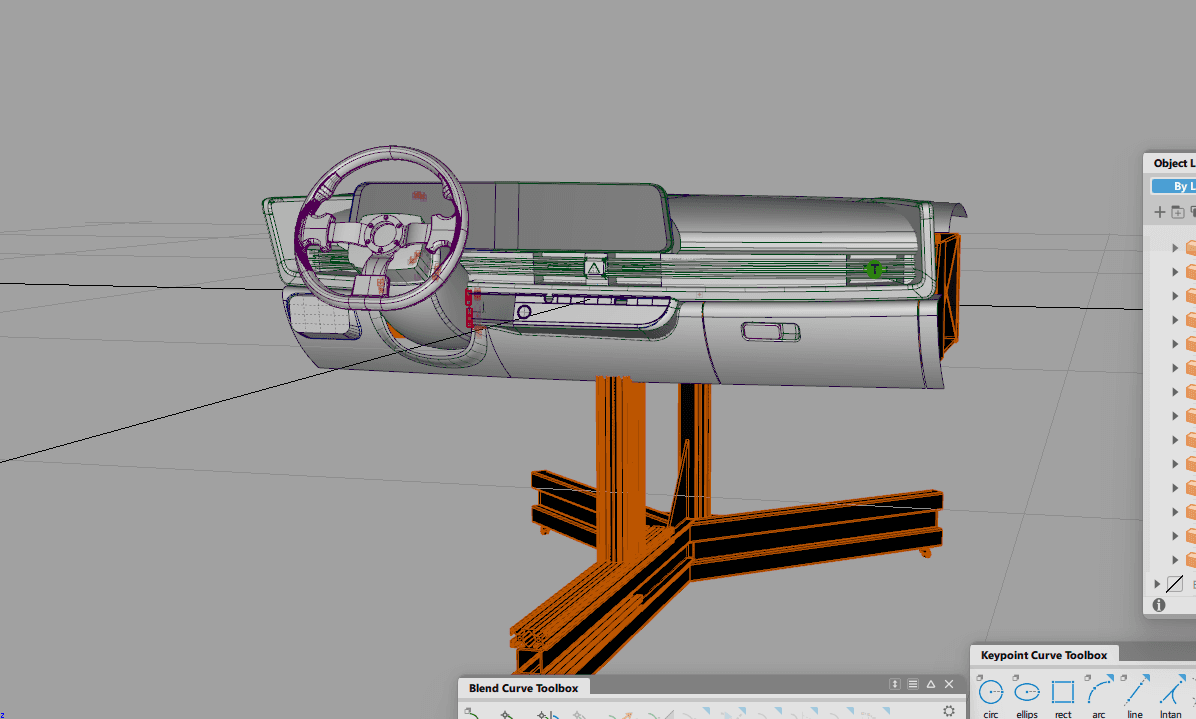

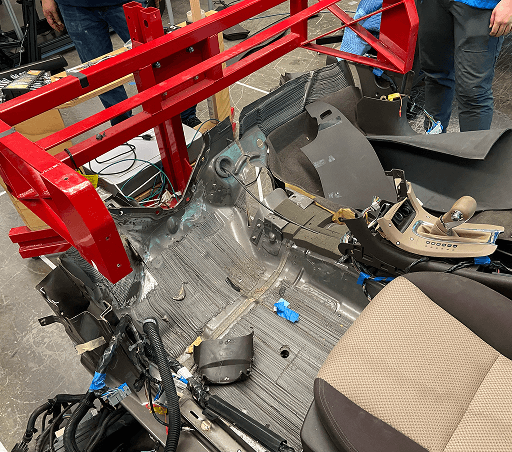

Accurate alignment between physical and digital environments was critical to the success of this system. Scan data required careful reconstruction to preserve production vehicle proportions while meeting fabrication and simulator constraints. Considerations such as driver eye point, seating position, reach zones, and mounting tolerances informed how the digital model was engineered and how physical components were integrated, reinforcing the need for a tightly coupled physical and digital reference.

The project schematically :

Early design exploration focused on translating conversational search into a clear interface structure. The challenge was to balance the AI chat interaction with familiar browsing elements so users still felt in control of the discovery process.

Concept sketches explored different homepage layouts and search entry points.

A series of hand-drawn frames that visually describe and explore a user's experience with a product.

I began by drawing storyboards to focus on just the most important parts of a user’s experience with the app. The story shows a group of friends trying to figure out where to eat and ultimately using FlavorFinder to get detailed recommendations for a nice local restaurant.

The big-picture storyboard focuses on the user experience. It shows how people will use the Voo's app during their day and how it will be useful.

The close-up storyboard focuses on the app rather than on the user experiencing the product. It shows what happens on each screen of the app.

We were able to determine three zones of legibility when testing text elements on the HUD. From there, we started creating an information hierarchy based on these zones to identify what should be displayed where.

During testing, users reported eye fatigue when there were large blocks of bright colors on the HUD. We pivoted to muted/transparent elements in later designs.

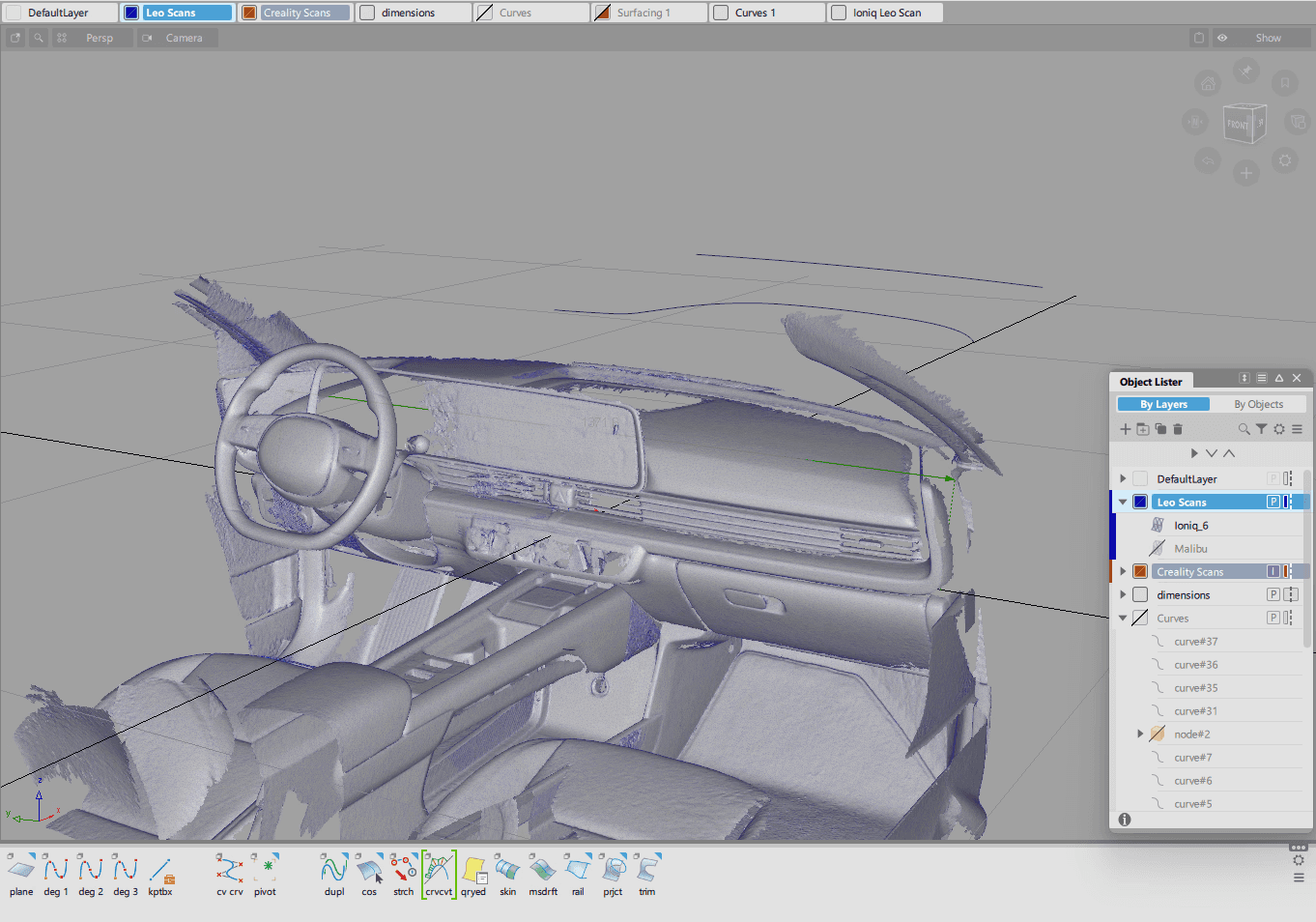

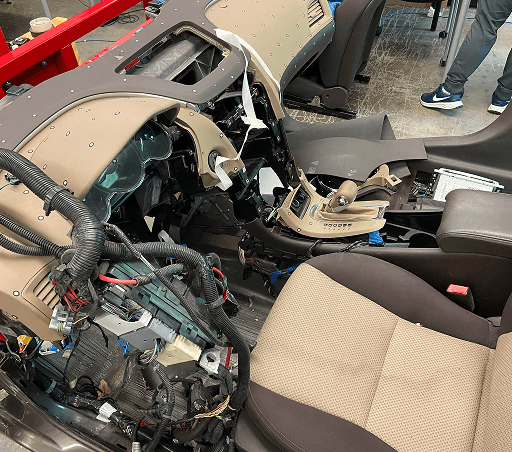

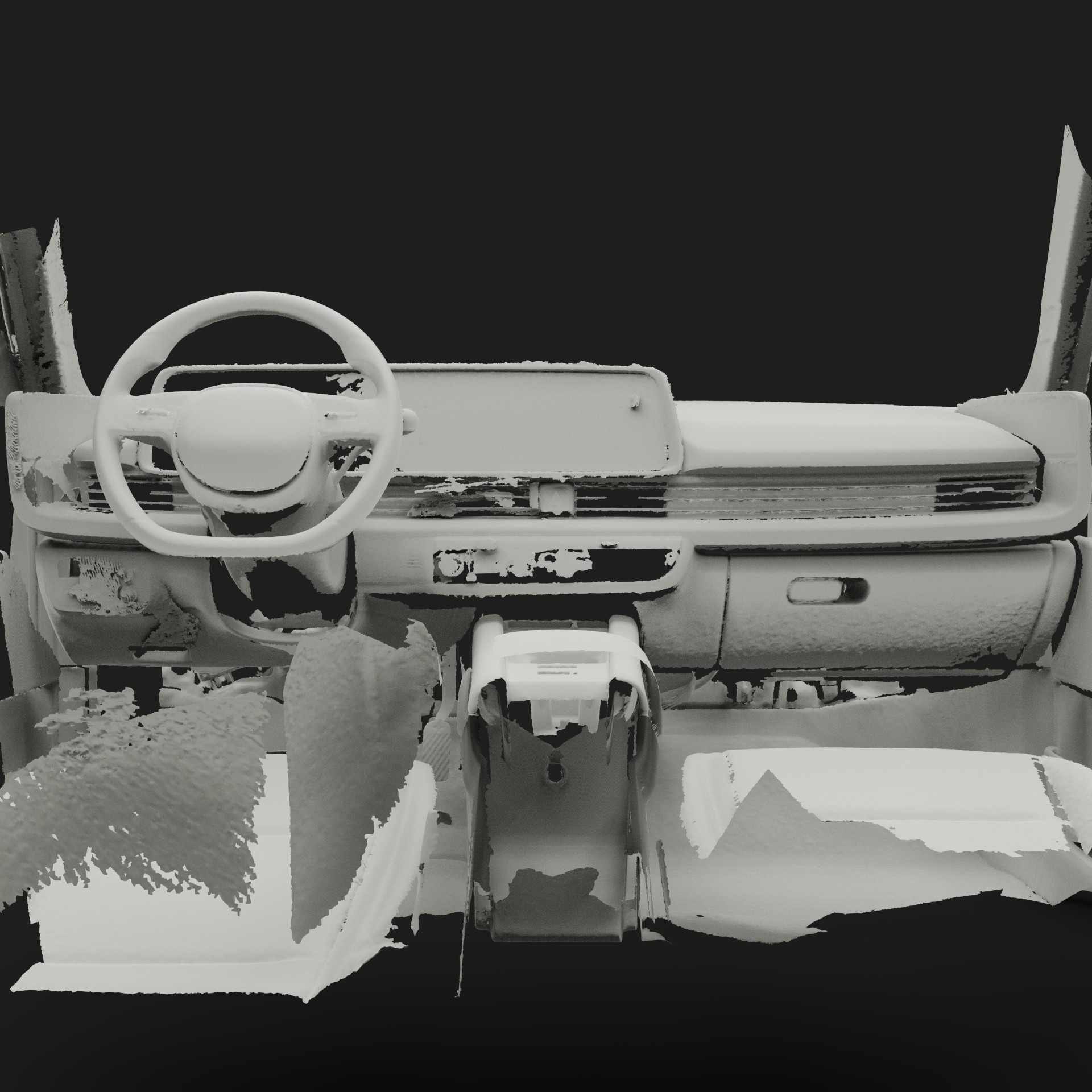

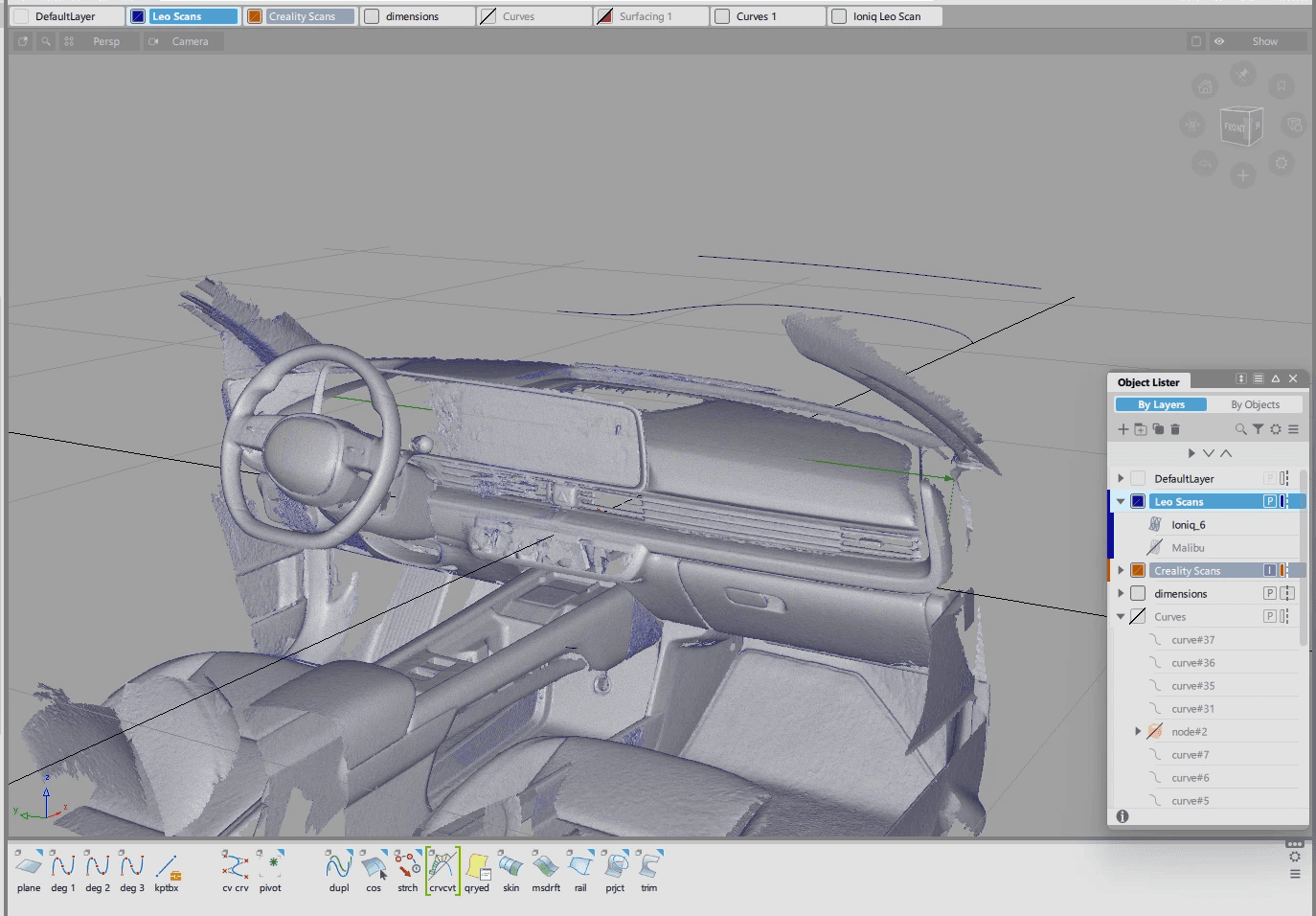

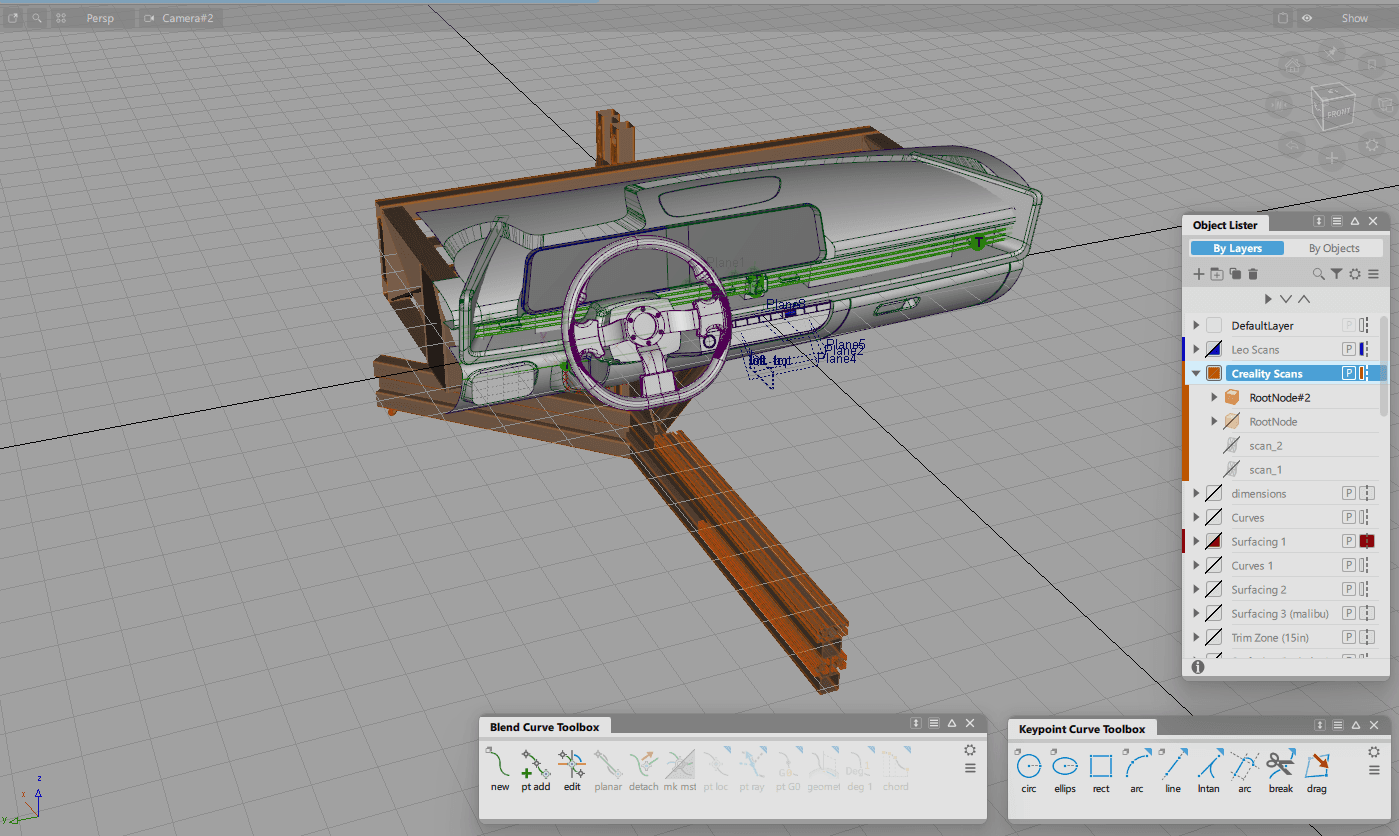

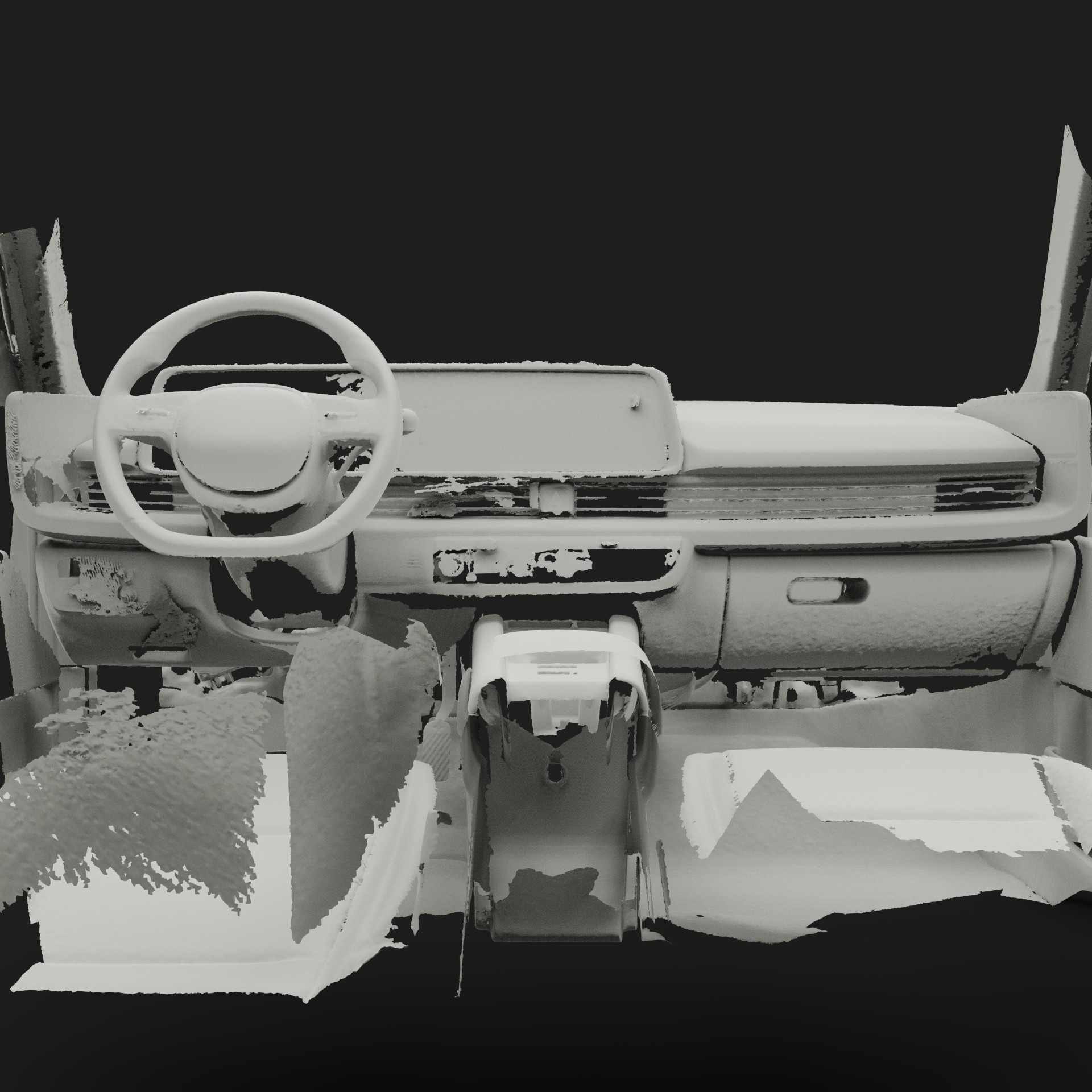

After we captured the interior of the Ioniq 6 with the Artec Leo 3D scanner, the resulting mesh became the digital reference from which the team built the dashboard and all surrounding geometry. The scan captured accurate proportions and spatial relationships, giving us the baseline needed for downstream modeling.

These are high-fidelity designs that represent the final product.

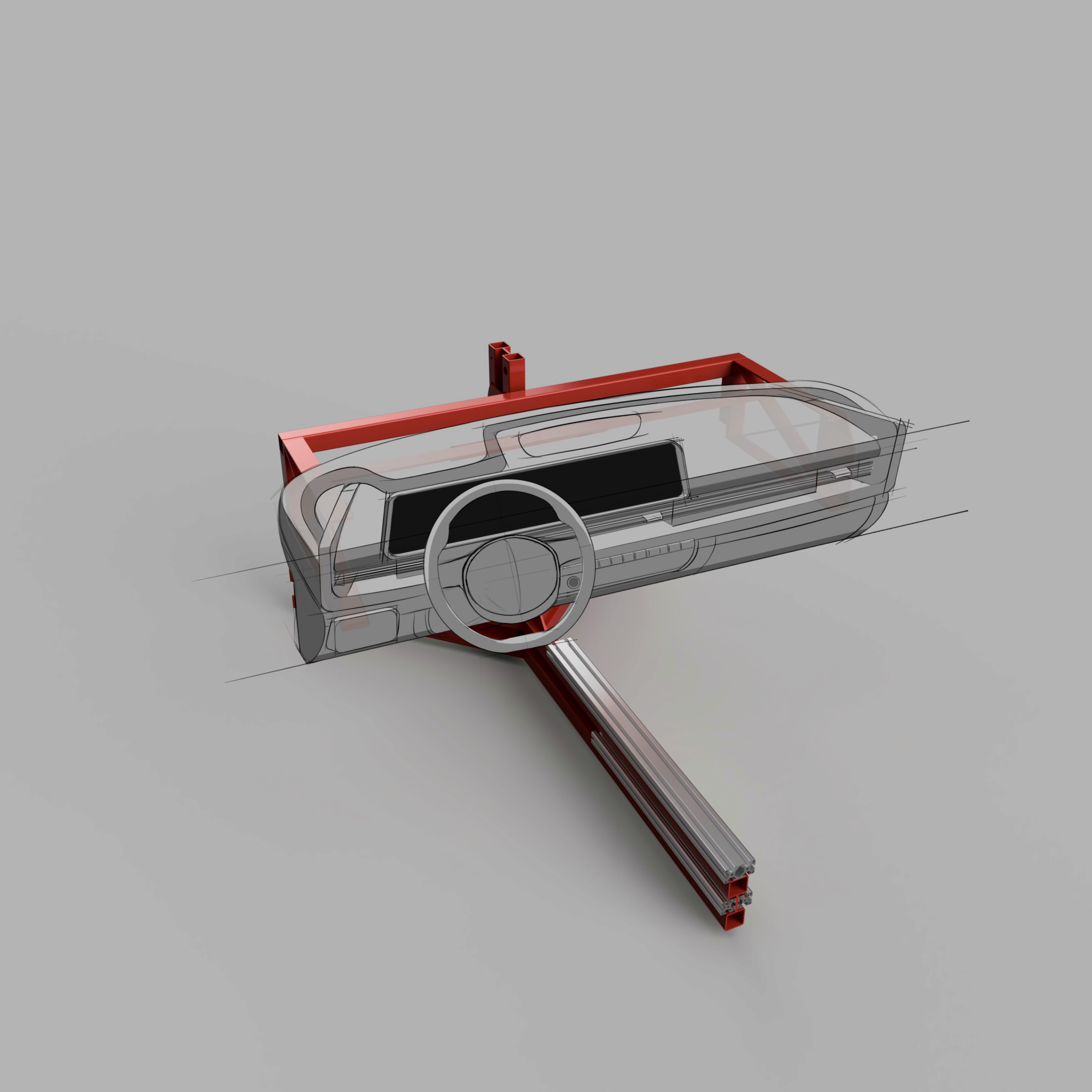

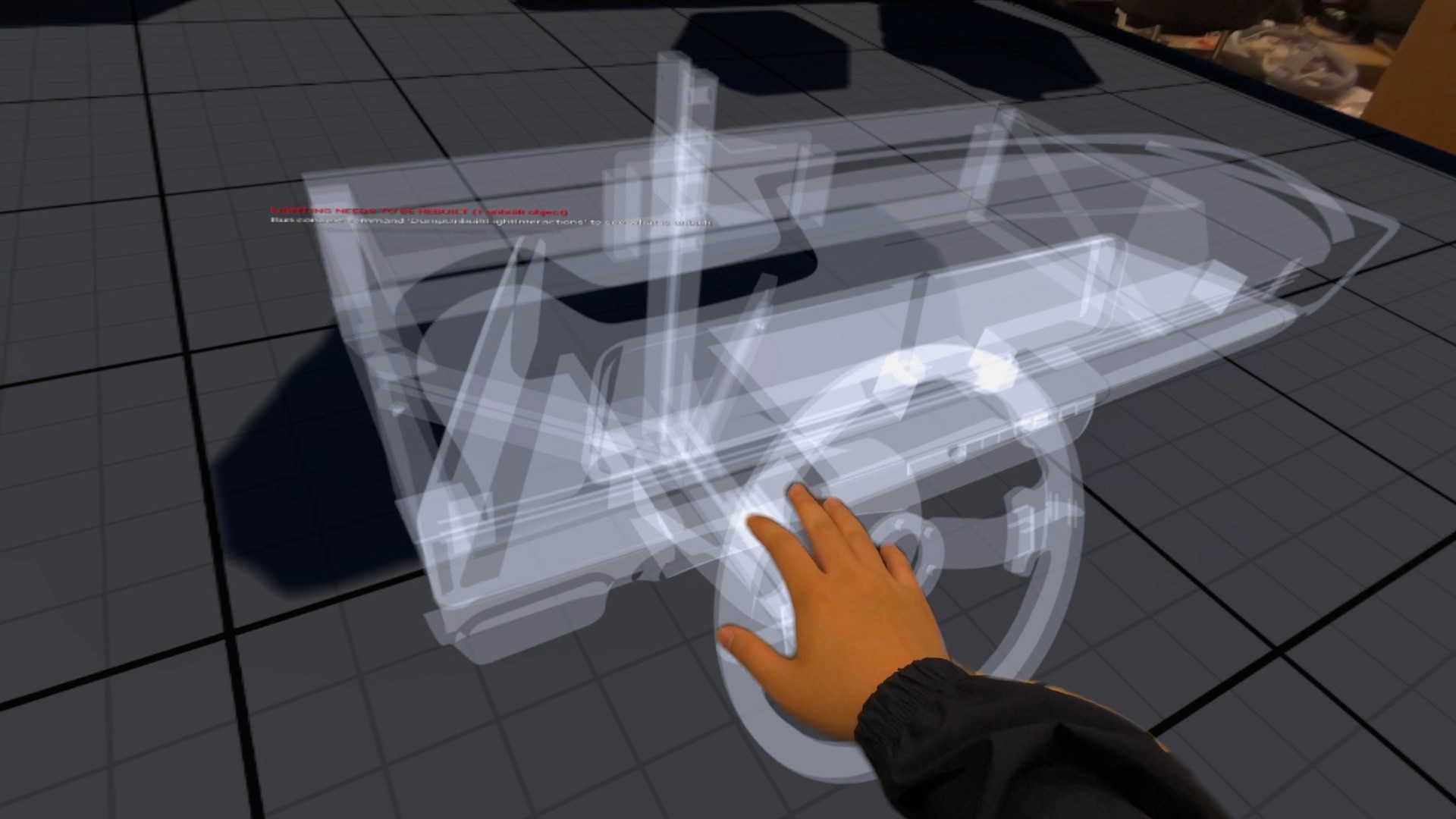

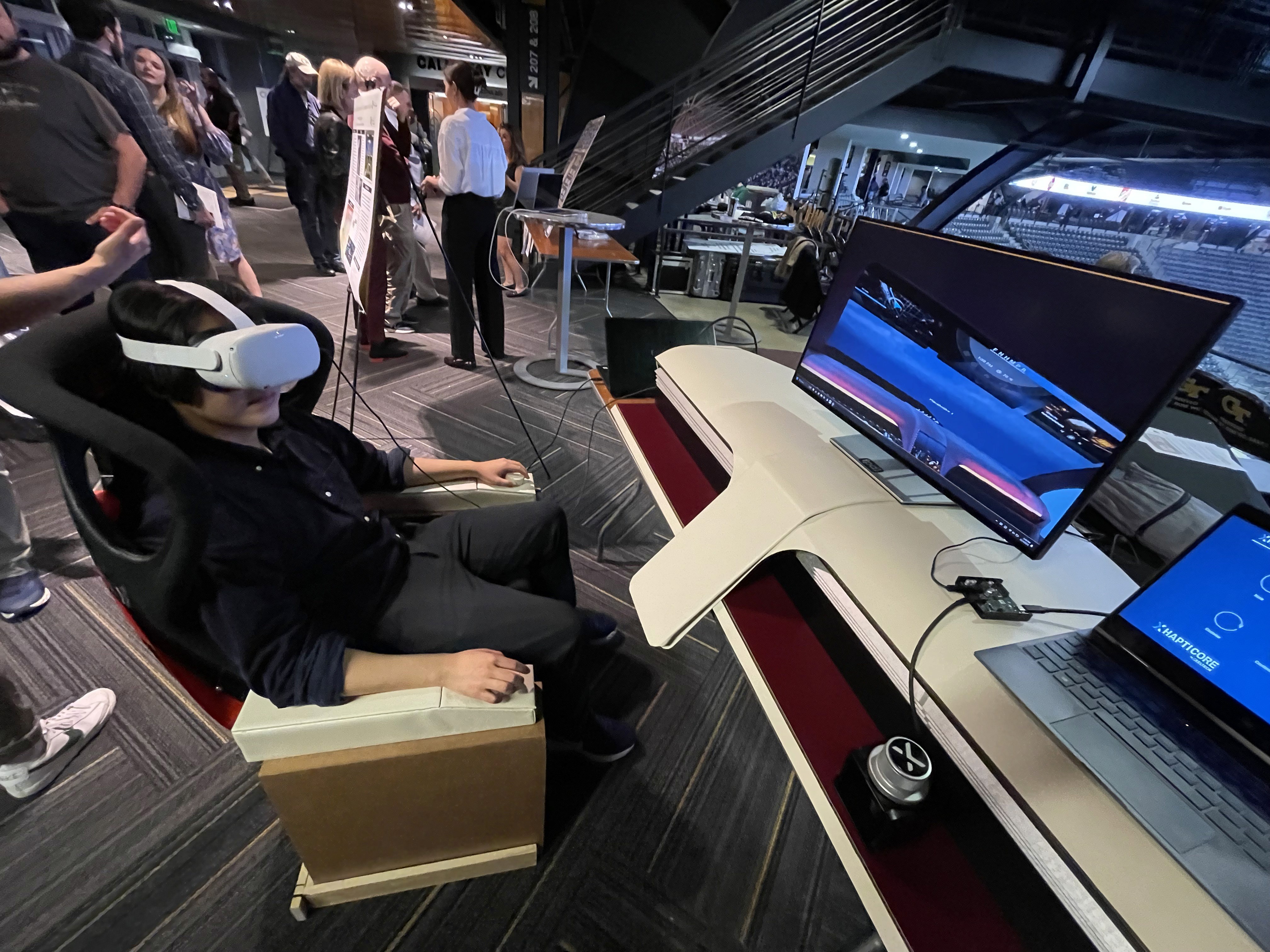

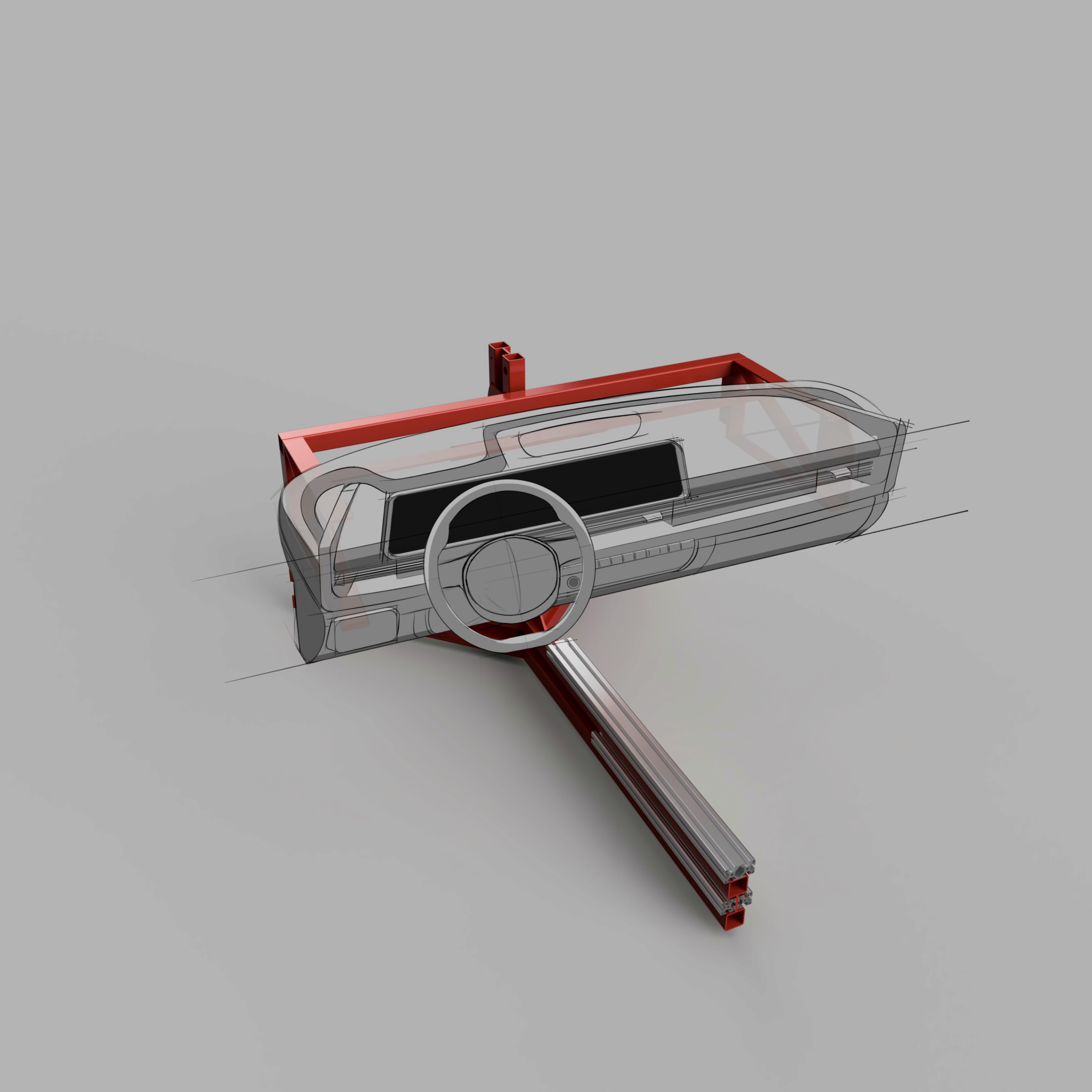

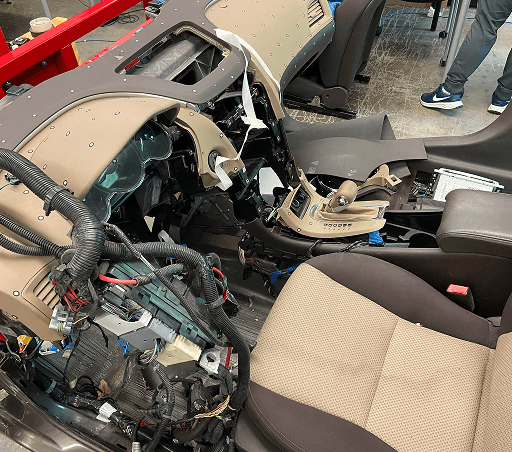

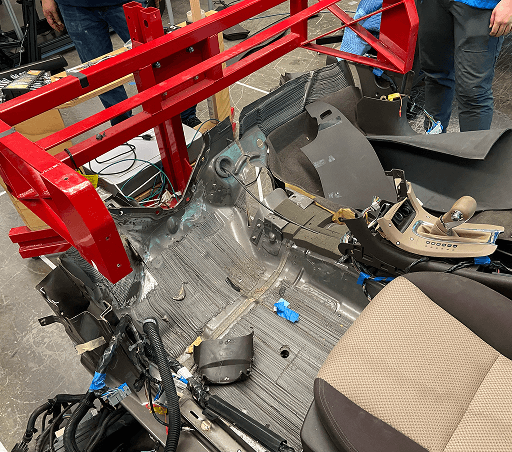

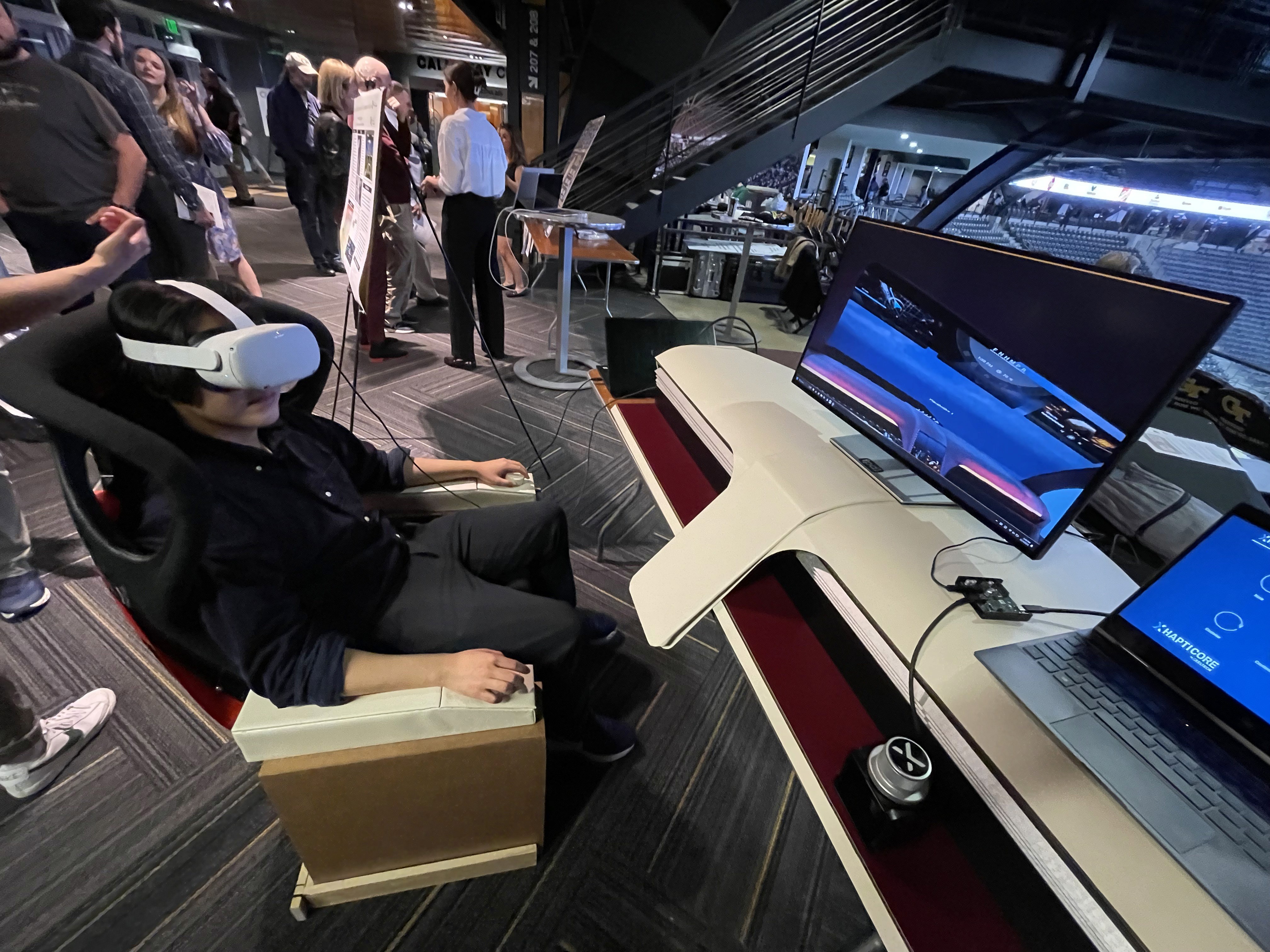

At the start of the project, the team identified the 2023 Hyundai Ioniq 6 as the target platform to test this mixed reality experience.

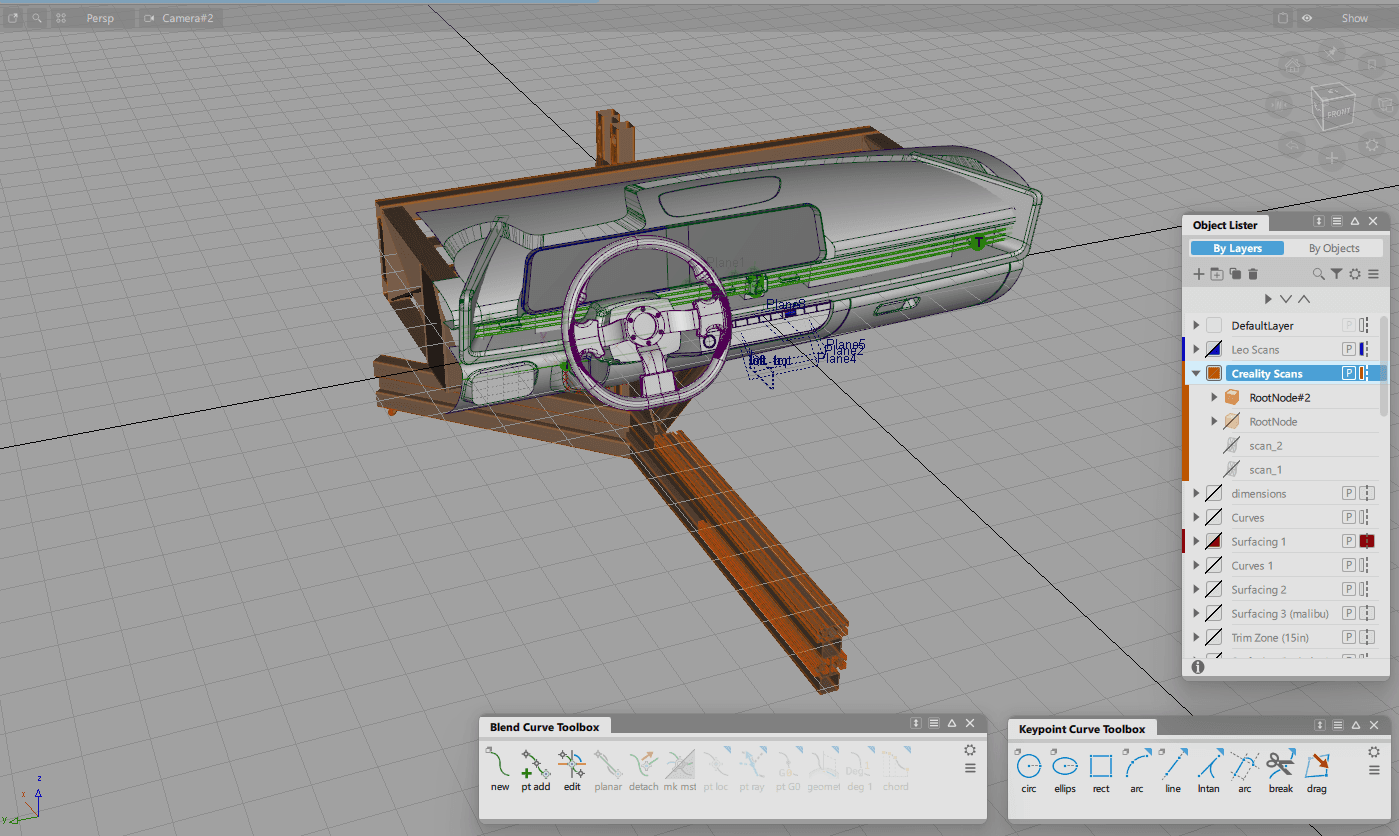

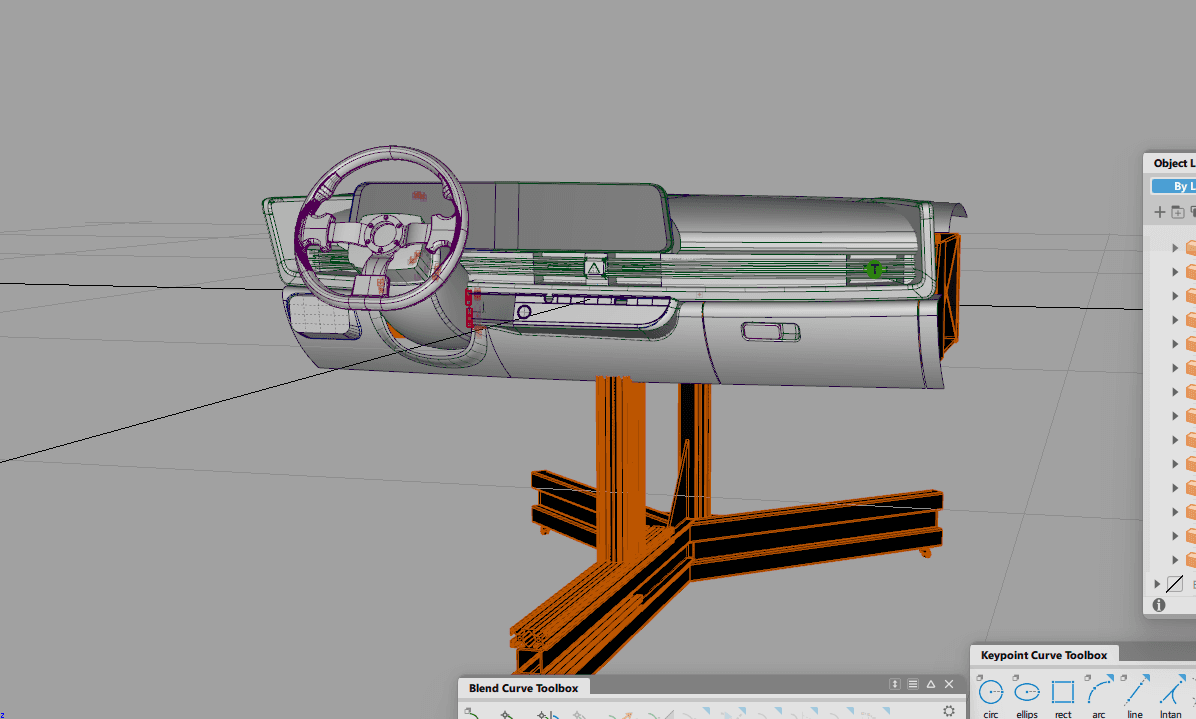

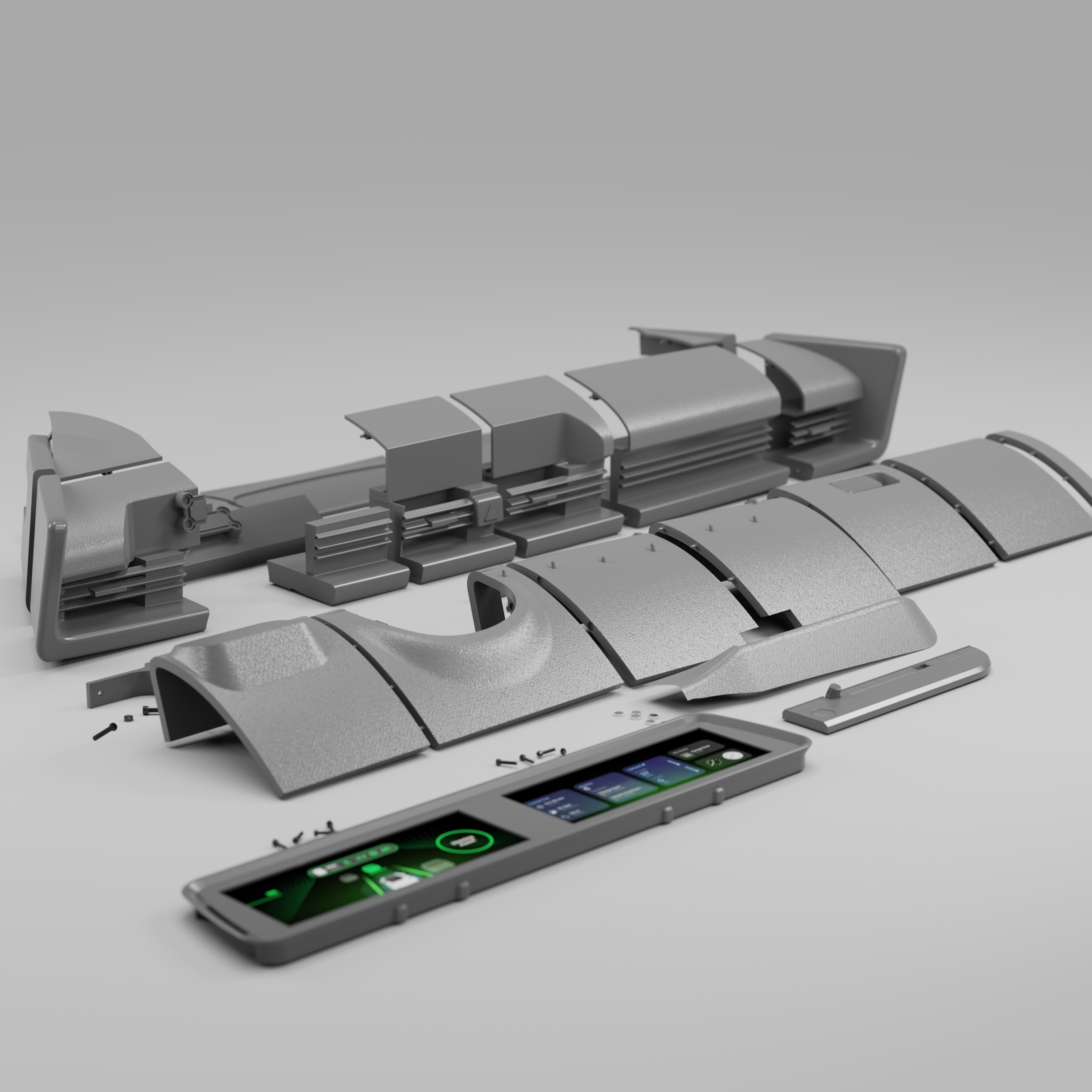

After placing the 3D scan into Autodesk Alias, we used the point data of the scan to guide the resurfacing of the Ioniq 6 interior. This shows the rebuilt surfaces in Alias.

The virtual development environment was created to support the design, testing, and evaluation of spatial interfaces while maintaining alignment with a physical interior dash model. This environment enables the simultaneous use of 3D content, interactive screens, and external development tools, allowing rapid iteration and comparison across platforms. By combining virtual and physical elements, the system serves as a mixed-reality testbed for evaluating usability, interaction methods, and system behavior in an automotive HMI context across different user groups.

It's a structured scheme that outlines the pages and content hierarchy of the app.

Apps like your calendar and email lean heavily into the realm of work.

Almost all social media leans into the rest category.

Next, I created a sitemap of the main functions and features of the website. The Search Interface and Partner Portal are the largest features, with many smaller functions built into each of them.

We first scanned the interior of an Ioniq 6 using an Artec Leo 3D scanner. This shows the raw output of this scan.

They initially focus on the basic structure of the homepage and highlight the intended function of each element.

The core concept behind FlavorFinder was to shift restaurant discovery from browsing to conversation. Instead of navigating multiple filters, users can simply describe what they are looking for. Example prompts include:

“Find a cozy Italian place nearby”

“Good vegan lunch options”

“Spicy ramen within 10 minutes”
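The prompts above suggest how a free-text request could map to structured search criteria. The following is only a minimal sketch in Python, assuming simple keyword matching in place of the AI model the platform relies on. The `Restaurant` type, the tag vocabularies, and the `parse_prompt` and `recommend` functions are hypothetical names introduced for illustration and are not part of the FlavorFinder project.

```python
from dataclasses import dataclass, field

# Hypothetical tag vocabularies; a real system would likely use an AI model instead.
CUISINES = {"italian", "ramen", "thai"}
DIETS = {"vegan", "vegetarian"}
VIBES = {"cozy", "spicy"}


@dataclass
class Restaurant:
    name: str
    minutes_away: int
    # Cuisine, diet, and vibe tags, assumed to come from menu-level data.
    tags: set = field(default_factory=set)


def parse_prompt(prompt: str) -> dict:
    """Extract known tags and an optional 'N minutes' limit from a prompt."""
    tokens = [t.strip(".,!?\"'“”").lower() for t in prompt.split()]
    wanted = set(tokens) & (CUISINES | DIETS | VIBES)
    max_minutes = None
    for i, tok in enumerate(tokens):
        # Treat a number followed by "minute(s)" as a travel-time limit.
        if tok.isdigit() and i + 1 < len(tokens) and tokens[i + 1].startswith("minute"):
            max_minutes = int(tok)
    return {"tags": wanted, "max_minutes": max_minutes}


def recommend(prompt: str, restaurants: list) -> list:
    """Return restaurant names ranked by tag overlap, then by travel time."""
    query = parse_prompt(prompt)
    matches = []
    for r in restaurants:
        if query["max_minutes"] is not None and r.minutes_away > query["max_minutes"]:
            continue  # too far away for the requested time limit
        score = len(query["tags"] & r.tags)
        if score > 0:
            matches.append((score, r))
    matches.sort(key=lambda pair: (-pair[0], pair[1].minutes_away))
    return [r.name for _, r in matches]


restaurants = [
    Restaurant("Noodle Bar", 8, {"ramen", "spicy"}),
    Restaurant("Far Ramen", 25, {"ramen", "spicy"}),
    Restaurant("Trattoria", 5, {"italian", "cozy"}),
]
print(recommend("Spicy ramen within 10 minutes", restaurants))
```

In this sketch the time limit acts as a hard filter while tag overlap drives ranking, which mirrors the idea of replacing a rigid filter panel with a single sentence.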

A cleaner version of the wireframes in digital form, with all the important pages added.

Then, I explored different layouts for the chat conversation and search results. All wireframes were built out using Figma.

This is an examination of users and their needs, which adds realistic context to the design process.

Early usability testing was conducted with a small group of participants to evaluate how easily users could understand and use the conversational search interface.

Participants were asked to complete a simple task: find a restaurant recommendation using the chat feature.

In the beginning, before choosing a city and theater, it would be great to look through the whole app and learn everything about it.

There is no movie search; it needs to be added to the movies list page.

If a user wants to change their account, they should be able to log out or delete it completely.

The clear version :

The refinement stage focused on improving visual hierarchy, clarifying the chat interaction, and optimizing the restaurant recommendation display.

Design iterations emphasized simplicity while maintaining clear access to supporting restaurant information.

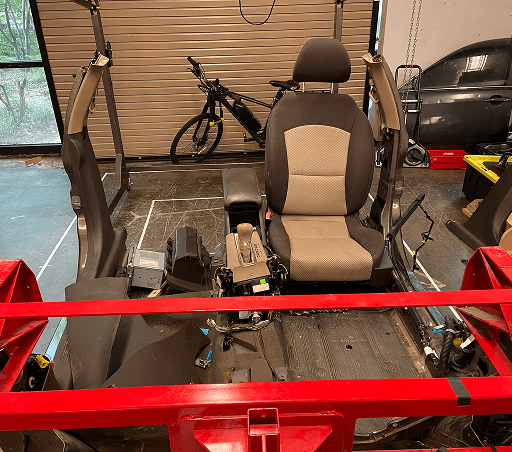

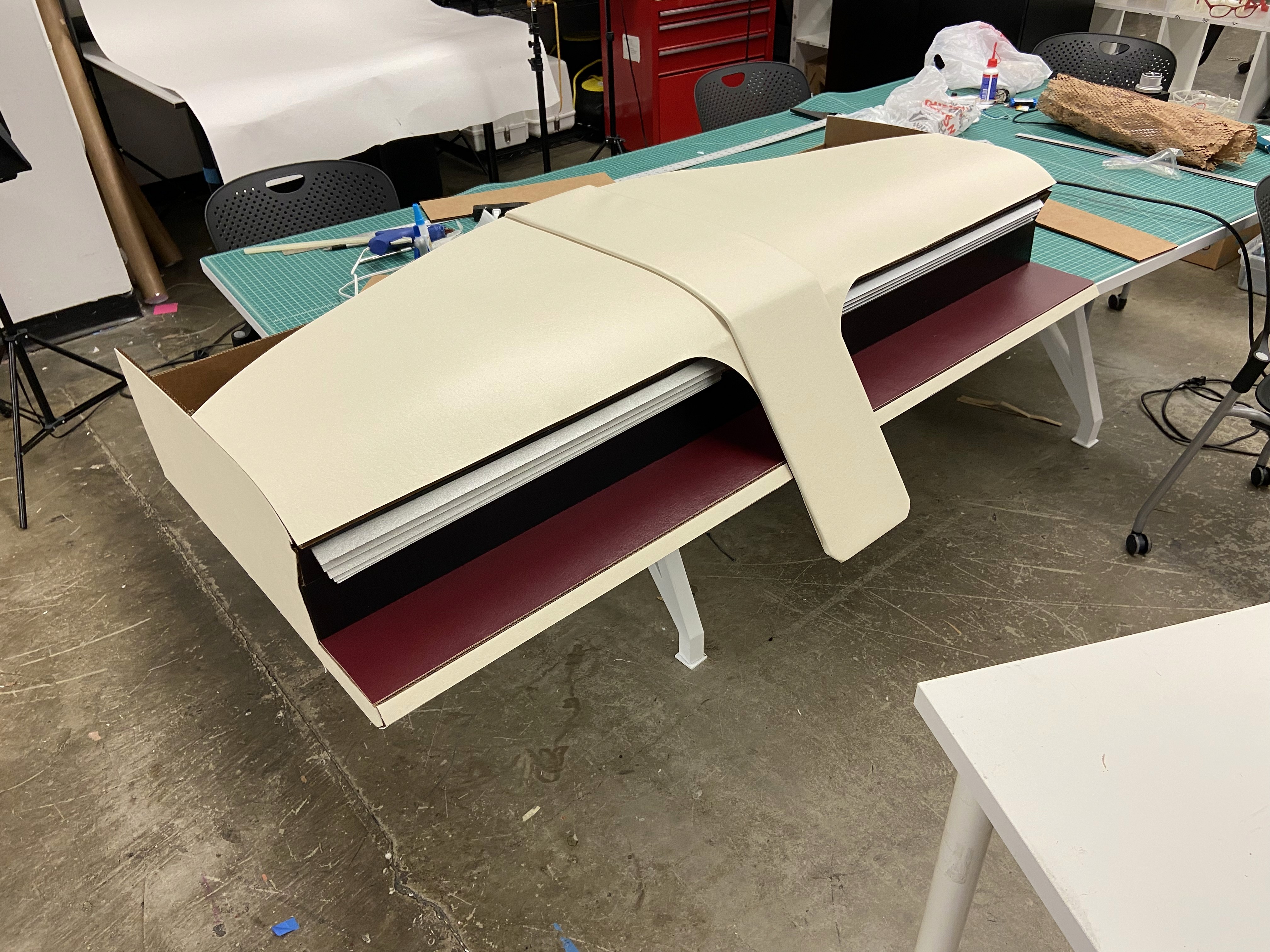

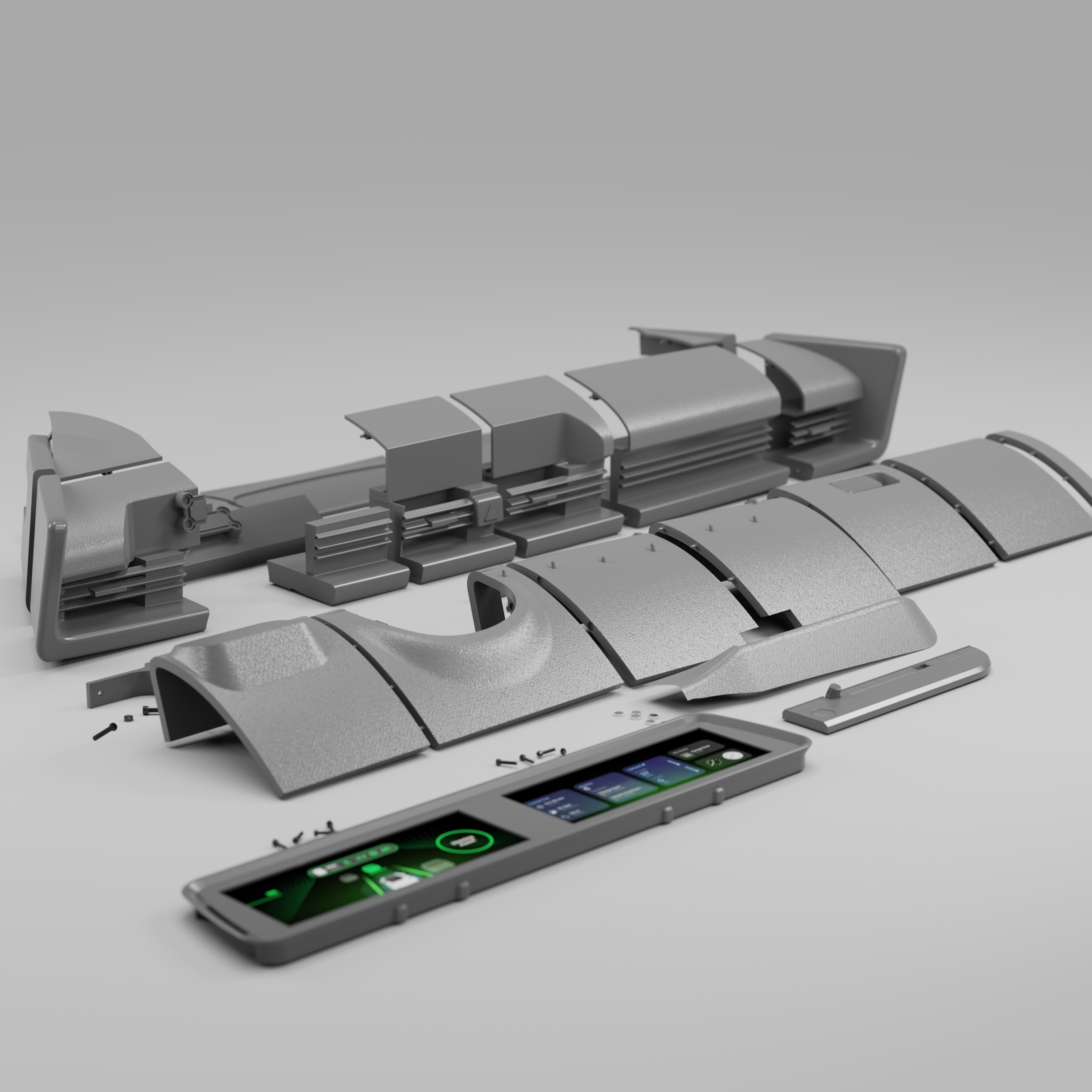

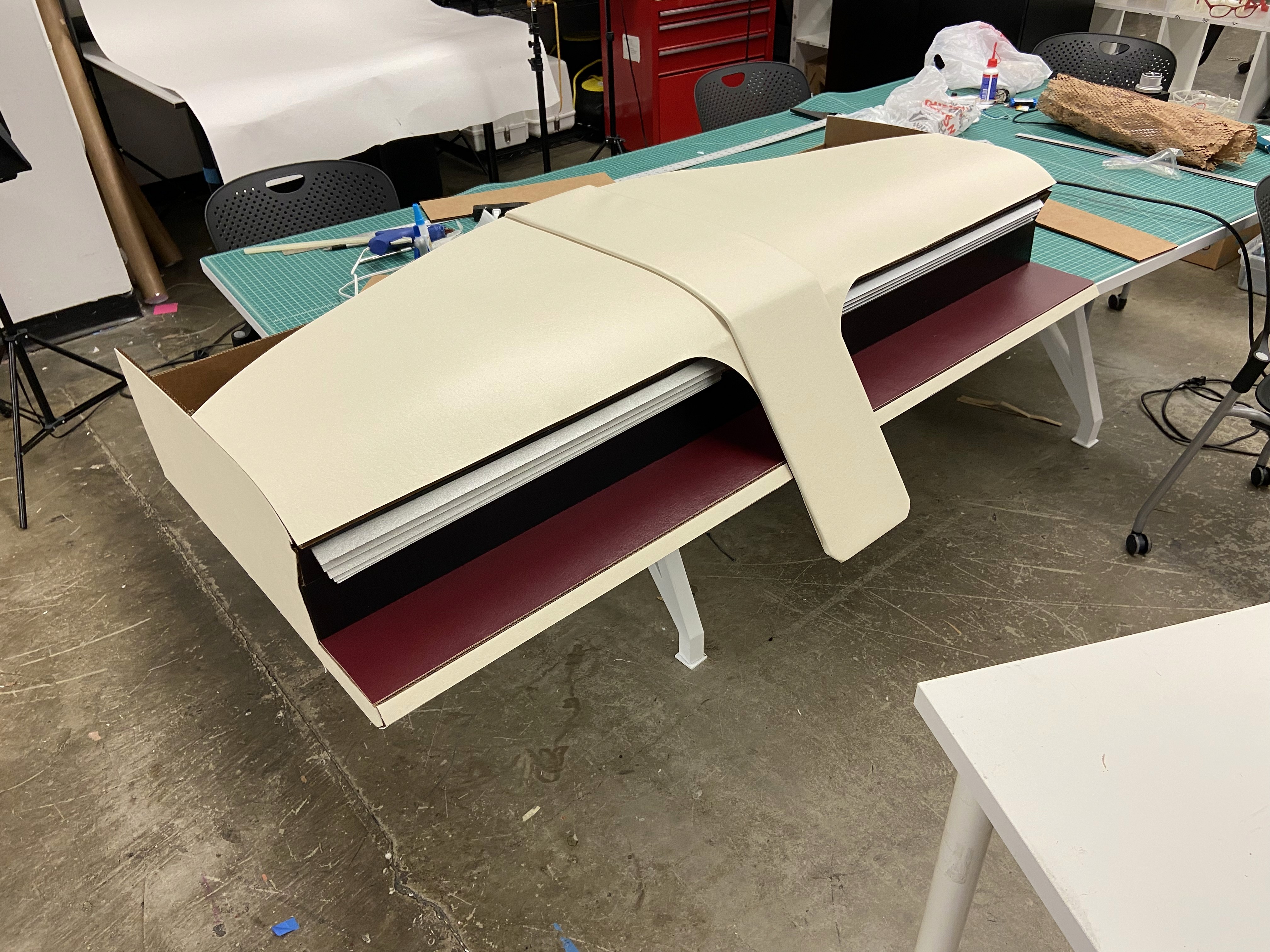

With the dashboard surfaces finalized in Alias, the next step was preparing the model for 3D printing. To accomplish this, we transitioned the design into Fusion 360, where we added material thickness, converted surfaces into solids, and introduced breakpoints to segment the dashboard based on the Formlabs Form 4L printer bed dimensions (13.9 x 7.7 x 13.8 inches).

After all parts on the top half of the dash were attached together, light spackle was used to fill the seams created where parts met. This meant that now the top of the dash was one full piece. The model now fits in the lab’s buck as a 1:1 representation of a 2023 Hyundai Ioniq 6 dashboard.

Assembling the model after printing out all the parts was simple. A lot of time and effort went into planning the B side of the parts so that slotting and gluing the parts together was as smooth as possible.

High-fidelity wireframes introduced the final visual language of the platform, including typography, spacing, and visual hierarchy. These screens refined the layout structure while ensuring the conversational interface remained the primary entry point for discovery.

This is the primary user flow that was tested on my prototypes. The flow involves a user starting on the FlavorFinder homepage and interacting with the AI-powered chat to ultimately decide where they want to eat.

It's the detailed, interactive version of designs that closely match the look and feel of the final product.

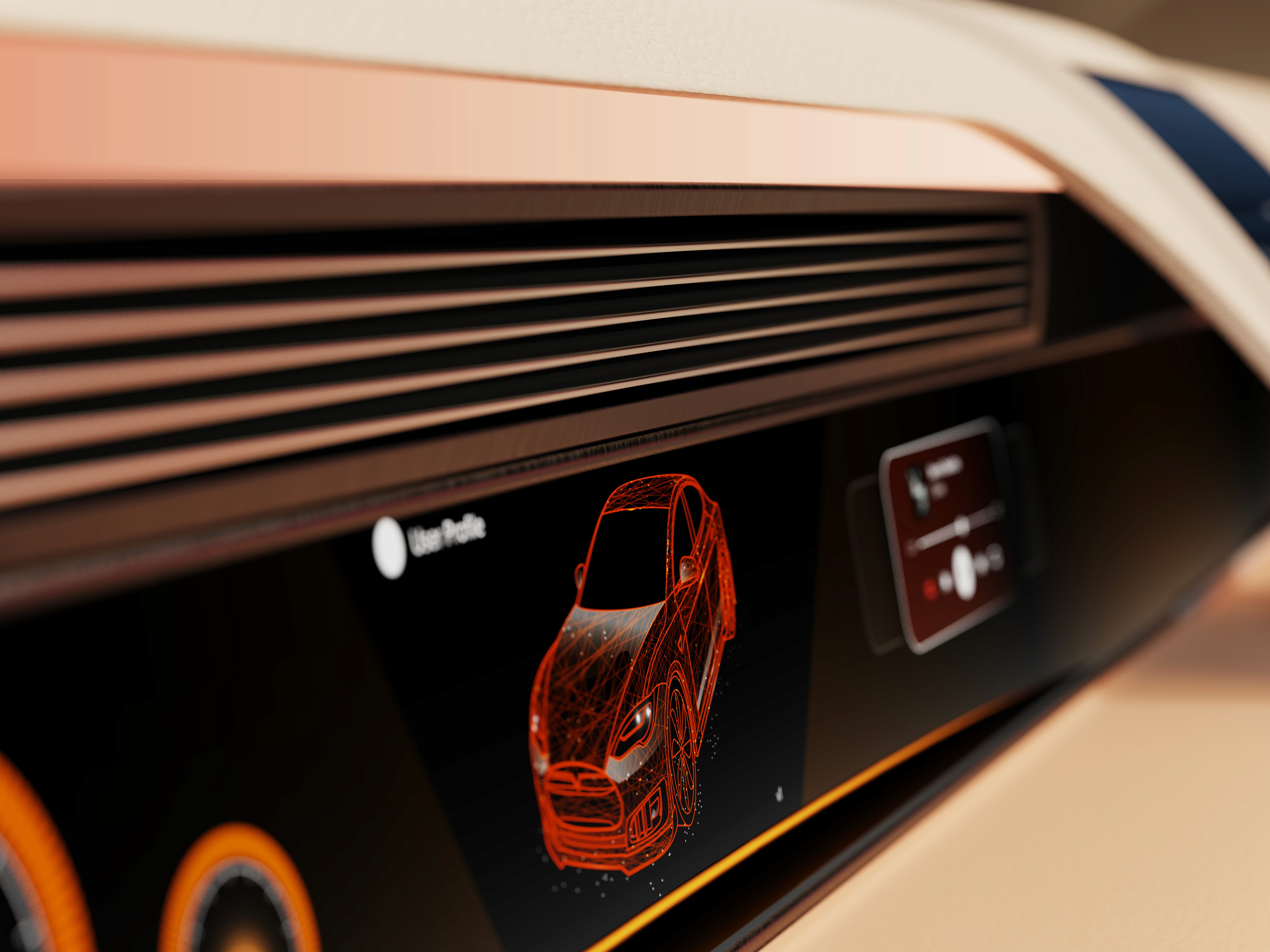

The center stack display and auxiliary displays carry most of the information related to the vehicle itself. They work in tandem with the HUD, since the information displayed on the HUD will be either work or social/entertainment related.

All of these screens were fully built out in Figma.

HUD and UI Settings

Environment Settings

Home (General Vehicle Settings)

Map Settings

Multimedia Settings

A clickable prototype was developed to simulate the full conversational search experience. The prototype demonstrates how users interact with the chat system, review recommendations, and navigate restaurant details.

City and cinema theater selection

Movies slideshow

List of movies + search option

Separate movie page, adding to favourites

Show selection: date and time, hall and seats

Adding selected seats

Calendar with results filtering

Menu and its sections

The project schematically :

The final outcome is a high-fidelity web application with API functions built into the backend to simulate an AI conversation for restaurant search.

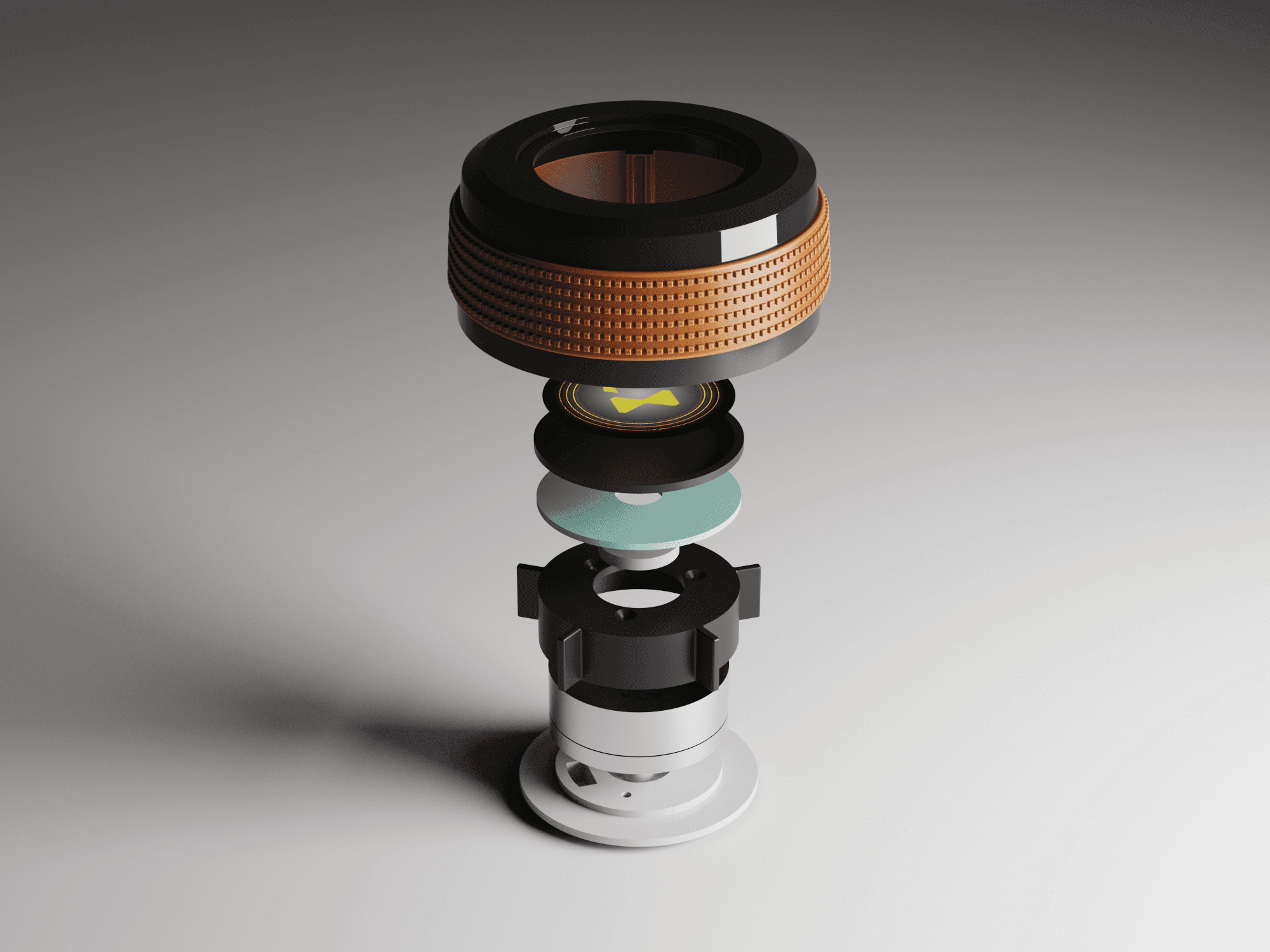

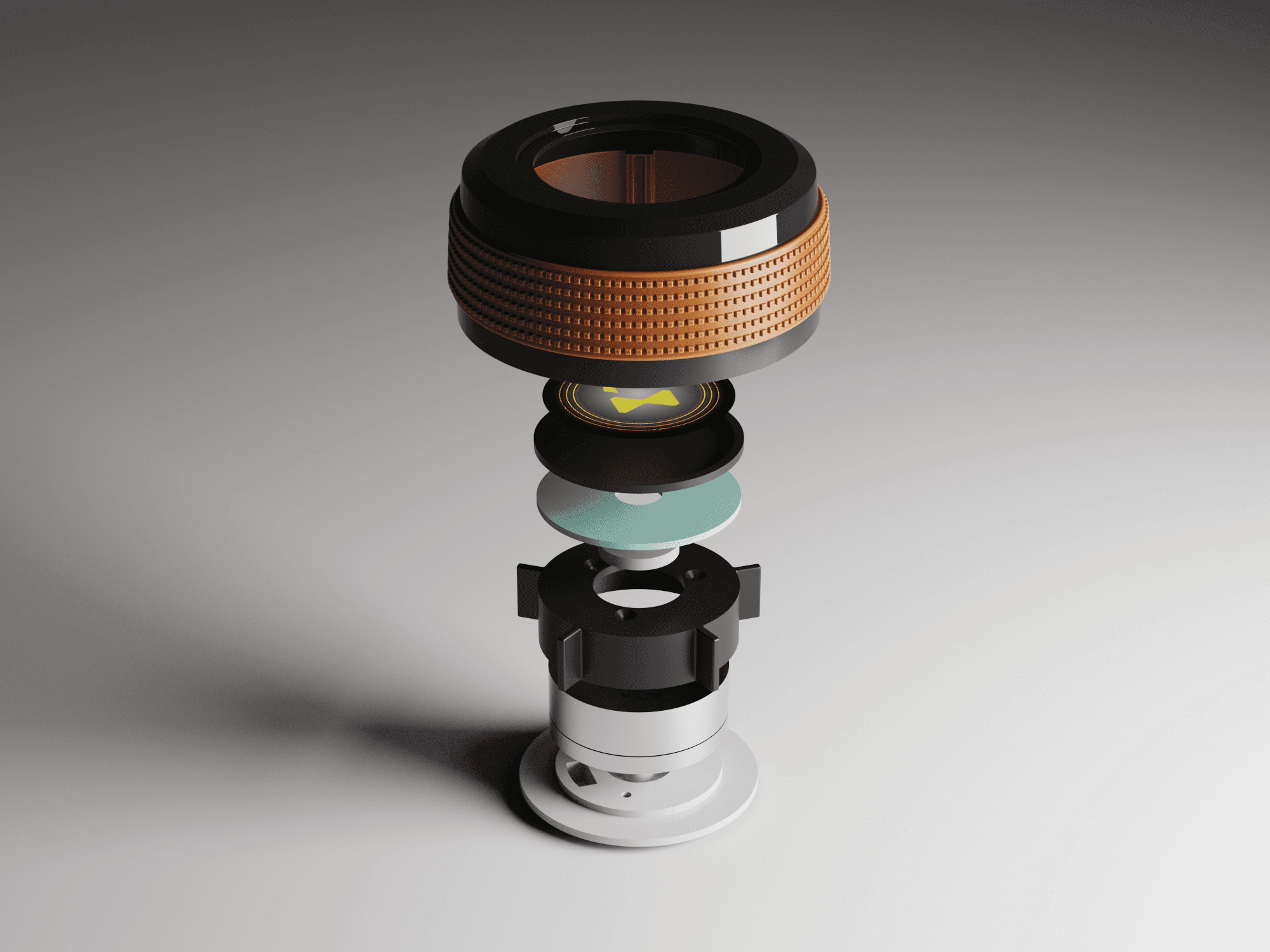

The new and improved haptic knob was built completely in-house and allowed us to customize the force feedback of the knob based on certain scenarios. This means as a user steps through the UI experience, the knob can be programmed to feel different at each step of the UI.

The final HUD has work (orange) and rest (blue) modes to account for whether the user is actively or passively engaging with the UI. This split perspective shows the difference in information density between the two modes on the HUD.

This project demonstrated how conversational interfaces can simplify everyday decision-making processes such as restaurant discovery.

The platform concept improves restaurant discovery efficiency while supporting local businesses through enhanced visibility and menu-driven recommendations.

This project reinforced the importance of designing AI-powered interactions that remain intuitive and approachable for users.

Future work for this app includes fully building out the backend database to hold restaurant information and tying in the necessary APIs.

Conduct additional testing to refine conversational interactions

Explore group decision-making features for shared restaurant searches

At the start of our research, we listed possible stakeholders and mapped them by their importance to and influence on the project, relative to one another. We noted where vulnerable populations would fall, but because this project is so closely tied to the experience inside the vehicle, we stayed focused on just the driver and our sponsor.

51% of Americans ages 18-29 say they would ride in a driverless vehicle if they had the opportunity. So, now we can narrow our user group further to Gen-Z commuters.

First, I conducted unmoderated usability studies with a few participants, who answered questions about the app and shared their observations while using the initial low-fi prototype. I then analyzed and synthesized the data, identified themes, and came up with several insights.

The goal was to identify pain points that the user experiences with the app designs so the issues can be fixed before the final product launches.

A fully autonomous vehicle can give you the best of both worlds. You can have your own personal space while the car does all the driving for you.

The question then becomes: What do you do with the time you spend riding in this vehicle?

Accurate alignment between physical and digital environments was critical to the success of this system. Scan data required careful reconstruction to preserve production vehicle proportions while meeting fabrication and simulator constraints. Considerations such as driver eye point, seating position, reach zones, and mounting tolerances informed how the digital model was engineered and how physical components were integrated, reinforcing the need for a tightly coupled physical and digital reference.

I developed a user journey map of Carlos's experience with the app to highlight potential pain points and identify areas for improvement.

Choose a good movie in a cinema theatre nearby and select seats in an app in a fast and clear way

The project schematically :

Early design exploration focused on translating conversational search into a clear interface structure. The challenge was to balance the AI chat interaction with familiar browsing elements so users still felt in control of the discovery process.

Concept sketches explored different homepage layouts and search entry points.

These are a high fidelity design that represents a final product

At the start of the project, the team identified the 2023 Hyundai Ioniq 6 at the target platform to test this mixed reality experience.

We were able to determine three zone of legibility when testing text elements on the HUD. From here we started creating an information hierarchy based on these zones to identify what should be displayed where.

When testing, users reported eye fatigue when there were large blocks of bright colors on the HUD. We pivoted to muted/ transparent elements in later designs.

After capturing the interior data of the Ioniq 6 with the Artec Leo 3D scanner, this mesh data became the digital reference that the team would use for the dashboard and all surrounding geometry to work from. The scan captured accurate proportions and spatial relationships, giving us the baseline needed for downstream modeling.

These are a high fidelity design that represents a final product

After placing the 3D scan into Autodesk Alias, we using the point data of the scan to guide the resurfacing of the Ioniq 6 interior. This shows the rebuilt surfaces in Alias.

The virtual development environment was created to support the design, testing, and evaluation of spatial interfaces while maintaining alignment with a physical interior dash model. This environment enables the simultaneous use of 3D content, interactive screens, and external development tools, allowing rapid iteration and comparison across platforms. By combining virtual and physical elements, the system serves as a mixed-reality testbed for evaluating usability, interaction methods, and system behavior in an automotive HMI context across different user groups.

The series of hand-drawing frames that visually describe and explore a user's experience with a product.

I began with drawing storyboards to focus on just the most important parts of a user’s experience with the app. It's a story showing a group a friends trying to figure out where they should go eat, and ultimately using FlavorFinder to get detailed recommendations on a nice local restaurant.

Big picture storyboard, which focuses on the user experience. It's about how people will use the Voo's app during their day and it will be useful.

Close-up storyboard focuses on the app instead of on the user experiencing that product. Shows what happens on each screen of the app.

These are a high fidelity design that represents a final product

It's a structured scheme that outlines the pages and content hierarchy of the app.

It's a structured scheme that outlines the pages and content hierarchy of the app.

Next, I created a sitemap of the main functions and features of the website. The Search Interface and Partner Portal are largest features with many smaller functions built into each of them.

It's a structured scheme that outlines the pages and content hierarchy of the app.

We first scanned the interior of an Ioniq 6 using an Artec Leo 3D scanner. This shows the raw output of this scan.

They initially oriented on the basic structure of the homepage and highlight the intended function of each element.

The core concept behind FlavorFinder was to shift restaurant discovery from browsing to conversation. Instead of navigating multiple filters, users can simply describe what they are looking for. Example prompts include:

“Find a cozy Italian place nearby”

“Good vegan lunch options”

“Spicy ramen within 10 minutes”

More "clear" version of wireframes in a digital form. Also all the important pages are added

in it.

Then, I explored different layouts for the chat conversation and search results. All wireframes were built out using Figma.

This is an examination of users and their needs, which adds realistic context to the design process.

Early usability testing was conducted with a small group of participants to evaluate how easily users could understand and use the conversational search interface.

Participants were asked to complete a simple task: find a restaurant recommendation using the chat feature.

In the beginning, before choosing a city and theater, it would be great to look through the whole app and learn everything about it.

There are no movie search - it's necessary to add it on the movies list page.

If user wants to change his account, he should be able to log out or delete it completely.

The clear version :

The refinement stage focused on improving visual hierarchy, clarifying the chat interaction, and optimizing the restaurant recommendation display.

Design iterations emphasized simplicity while maintaining clear access to supporting restaurant information.

It's a structured scheme that outlines the pages and content hierarchy of the app.

With the dashboard surfaces finalized in Alias, the next step was preparing the model for 3D printing. To accomplish this, we transitioned the design into Fusion 360, where we added material thickness, converted surfaces into solids, and introduced breakpoints to segment the dashboard based on the Formlabs Form 4L printer bed dimensions (13.9 x 7.7 x 13.8 inches).

It's a structured scheme that outlines the pages and content hierarchy of the app.

After all parts on the top half of the dash were attached together, light spackle was used to fill the seams created where parts met. This meant that now the top of the dash was one full piece. The model now fits in the lab’s buck as a 1:1 representation of a 2023 Hyundai Ioniq 6 dashboard.

These are a high fidelity design that represents a final product

Assembling the model after printing out all the parts was simple. A lot of time and effort went into planning the B side of the parts so that slotting and gluing the parts together was as smooth as possible.

We were able to determine three zone of legibility when testing text elements on the HUD. From here we started creating an information hierarchy based on these zones to identify what should be displayed where.

We were able to determine three zone of legibility when testing text elements on the HUD. From here we started creating an information hierarchy based on these zones to identify what should be displayed where.

These are a high fidelity design that represents a final product

High fidelity wireframes introduced the final visual language of the platform, including typography, spacing, and visual hierarchy. These screens refined the layout structure while ensuring the conversational interface remained the primary entry point for discovery.

This is the primary user flow that was tested on my prototypes. The flow involves a user starting on the FlavorFinder homepage and interacting with the AI-powered chat to ultimately decide where they want to eat.

It's the detailed, interactive version of designs that closely match the look and feel of the final product.

A clickable prototype was developed to simulate the full conversational search experience. The prototype demonstrates how users interact with the chat system, review recommendations, and navigate restaurant details.

City and cinema theater selection

Movies slideshow

List of movies + search option

Separate movie page, adding to favourites

Show selection: date and time, hall and seats

Adding selected seats

Calendar with results filtering

Menu and its sections

It's the detailed, interactive version of designs that closely match the look and feel of the final product.

The center stack display and auxiliary displays carry most of the information related to the vehicle itself. They work in tandem with the HUD since the information being displayed on the HUD will either be work or social/ entertainment related.

All of these screens were fully built out in Figma.

HUD and UI Settings

Environment Settings

Home (General Vehicle Settings)

Map Settings

Multimedia Settings

The project schematically :

The final outcome is a high fidelity web application with API functions built into the backend to simulate AI conversation with restaurant searching.

It's a structured scheme that outlines the pages and content hierarchy of the app.

The new and improved haptic knob was built completely in-house and allowed us to customize the force feedback of the knob based on certain scenarios. This means as a user steps through the UI experience, the knob can be programmed to feel different at each step of the UI.

This project demonstrated how conversational interfaces can simplify everyday decision-making processes such as restaurant discovery.

The platform concept improves restaurant discovery efficiency while supporting local businesses through enhanced visibility and menu-driven recommendations.

This project reinforced the importance of designing AI-powered interactions that remain intuitive and approachable for users.

Future work for this app includes fully building out the backend database to hold restaurant information and integrating the necessary APIs.

Conduct additional testing to refine conversational interactions

Explore group decision-making features for shared restaurant searches

The platform primarily serves two user groups: diners looking for faster restaurant discovery and small restaurant owners seeking increased visibility. Users often search for restaurants in group settings where multiple preferences must be considered.

I developed a user journey map of a typical user's experience with searching for food to pinpoint potential pain points and identify areas for improvement.

The ideal journey begins with a user describing what they want to eat through conversational search. The system interprets their request and returns tailored recommendations that help users quickly decide where to eat.

Replace complex filtering with conversational search

Highlight small or local restaurants through featured partnerships

Use menu-level data to generate better recommendations

FlavorFinder transforms restaurant discovery from a frustrating browsing experience into a guided conversation. By combining AI search with detailed restaurant data, the platform helps users find food faster while increasing visibility for local businesses.

Early design exploration focused on translating conversational search into a clear interface structure. The challenge was to balance the AI chat interaction with familiar browsing elements so users still felt in control of the discovery process.

Concept sketches explored different homepage layouts and search entry points.

I began by drawing storyboards to focus on the most important parts of a user’s experience with the app. The story shows a group of friends trying to figure out where to eat and ultimately using FlavorFinder to get detailed recommendations for a nice local restaurant.

Next, I created a sitemap of the main functions and features of the website. The Search Interface and Partner Portal are the largest features, each with many smaller functions built into it.

The core concept behind FlavorFinder was to shift restaurant discovery from browsing to conversation. Instead of navigating multiple filters, users can simply describe what they are looking for. Example prompts include:

“Find a cozy Italian place nearby”

“Good vegan lunch options”

“Spicy ramen within 10 minutes”
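To illustrate how prompts like these could replace a set of filters, the sketch below maps free text onto structured search criteria. It is only an assumption about how such interpretation might work: the keyword lists, the `SearchIntent` fields, and `parse_prompt` are hypothetical, and a real system would more likely use a language model than fixed vocabularies.

```python
import re
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical vocabularies used only for this illustration.
CUISINES = {"italian", "ramen", "sushi", "thai", "mexican"}
DIETS = {"vegan", "vegetarian", "gluten-free"}
VIBES = {"cozy", "spicy", "quiet", "casual"}


@dataclass
class SearchIntent:
    cuisines: List[str] = field(default_factory=list)
    diets: List[str] = field(default_factory=list)
    vibes: List[str] = field(default_factory=list)
    nearby: bool = False
    max_minutes: Optional[int] = None


def parse_prompt(prompt: str) -> SearchIntent:
    """Turn a free-text craving into the filters a user would otherwise set by hand."""
    text = prompt.lower()
    words = re.findall(r"[a-z-]+", text)
    intent = SearchIntent(
        cuisines=[w for w in words if w in CUISINES],
        diets=[w for w in words if w in DIETS],
        vibes=[w for w in words if w in VIBES],
        nearby="nearby" in words or "near me" in text,
    )
    # Pick up a travel-time limit such as "within 10 minutes".
    minutes = re.search(r"within (\d+) minutes?", text)
    if minutes:
        intent.max_minutes = int(minutes.group(1))
    return intent
```

For example, `parse_prompt("Spicy ramen within 10 minutes")` yields a ramen cuisine, a spicy preference, and a 10-minute travel limit without the user touching a single filter control.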

Then, I explored different layouts for the chat conversation and search results. All wireframes were built out using Figma.

This is an examination of users and their needs, which adds realistic context to the design process.

Early usability testing was conducted with a small group of participants to evaluate how easily users could understand and use the conversational search interface.

Participants were asked to complete a simple task: find a restaurant recommendation using the chat feature.

Users quickly understood the conversational search interaction

Some users initially overlooked the chat entry point on the homepage

Participants preferred seeing a small set of curated results instead of long lists

The refinement stage focused on improving visual hierarchy, clarifying the chat interaction, and optimizing the restaurant recommendation display.

Design iterations emphasized simplicity while maintaining clear access to supporting restaurant information.

High-fidelity wireframes introduced the final visual language of the platform, including typography, spacing, and visual hierarchy. These screens refined the layout structure while ensuring the conversational interface remained the primary entry point for discovery.
